In industries like autonomous driving, robotics, and smart infrastructure, LiDAR data serves as the foundation of perception systems that help machines understand the physical world. But the performance of those systems depends on more than just collecting large datasets. It depends on how accurately those datasets are labeled.

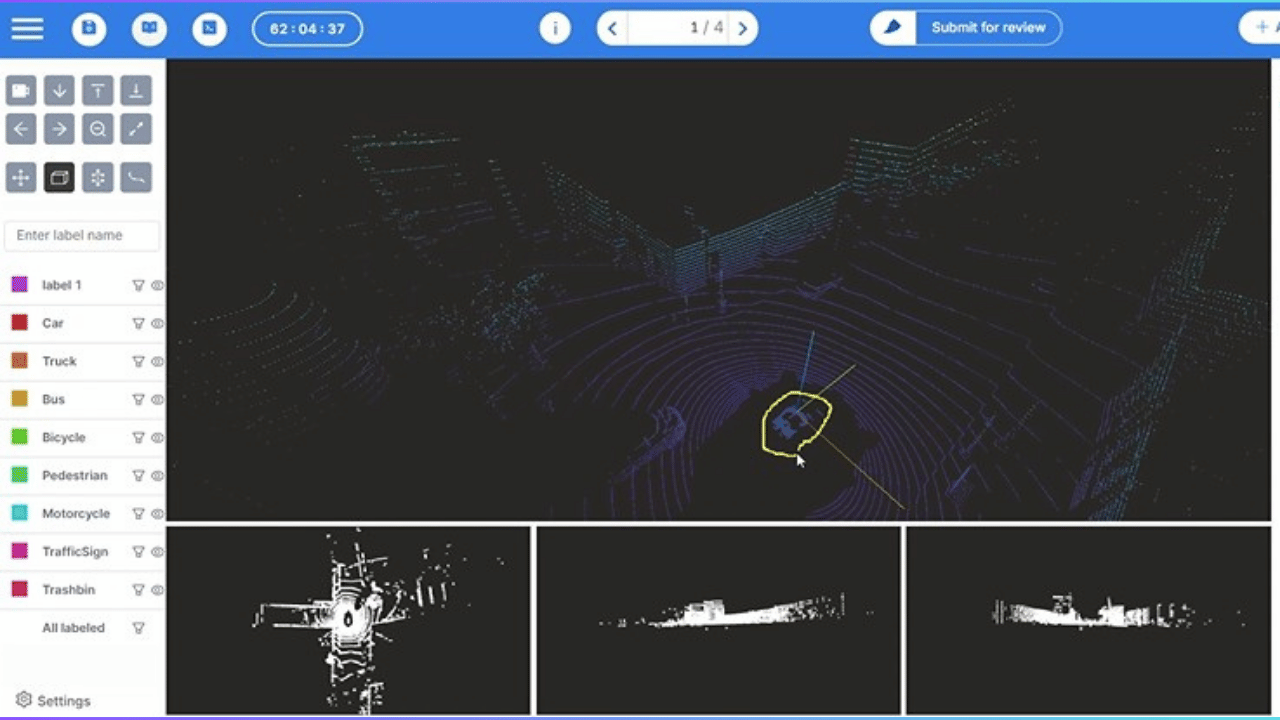

For teams building perception models, cuboids are the primary way to define objects in LiDAR point clouds. They establish where a vehicle, pedestrian, cyclist, or obstacle exists in three-dimensional space. If those cuboids are even slightly inconsistent or misaligned, they introduce label noise into the dataset that can weaken model performance and reliability.

Many teams assume that improving LiDAR accuracy requires collecting more data or tweaking the model. In reality, annotation workflows play an equally critical role. How annotators create, adjust, and standardize cuboids can significantly affect the quality of the training data.

Modern annotation platforms are starting to solve this problem by helping teams create cleaner, more consistent labels faster. By rethinking how cuboids are generated and managed, organizations can significantly improve LiDAR annotation accuracy while reducing the time and effort required to produce high-quality datasets.

Why Accuracy Matters for LiDAR Models

LiDAR-based perception systems rely heavily on labeled point cloud datasets to train object detection models. In these datasets, cuboids are used to define the boundaries of objects such as vehicles, pedestrians, cyclists, and infrastructure elements. Each cuboid represents an object’s position, orientation, and spatial footprint within the point cloud.
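To make "position, orientation, and spatial footprint" concrete, here is a minimal sketch of one common cuboid parameterization: a center point, three dimensions, and a yaw angle about the vertical axis. The class and field names, the units, and the assumption that boxes rotate only about the z-axis (no pitch or roll) are illustrative choices, not any particular tool's format.

```python
from __future__ import annotations

import math
from dataclasses import dataclass


@dataclass
class Cuboid:
    """A 3D box defined by its center, dimensions, and heading.

    Assumptions for illustration: units are meters and radians, and the box
    rotates only about the vertical (z) axis, with no pitch or roll.
    """
    cx: float
    cy: float
    cz: float
    length: float  # extent along the heading direction
    width: float   # extent across the heading, in the ground plane
    height: float  # vertical extent
    yaw: float     # heading angle about the z-axis

    def corners(self) -> list[tuple[float, float, float]]:
        """Return the 8 corner points in world coordinates."""
        c, s = math.cos(self.yaw), math.sin(self.yaw)
        pts = []
        for dx in (-self.length / 2, self.length / 2):
            for dy in (-self.width / 2, self.width / 2):
                for dz in (-self.height / 2, self.height / 2):
                    # Rotate the local offset by yaw, then shift to the center.
                    pts.append((self.cx + dx * c - dy * s,
                                self.cy + dx * s + dy * c,
                                self.cz + dz))
        return pts


car = Cuboid(cx=10.0, cy=2.0, cz=0.8, length=4.5, width=1.9, height=1.6,
             yaw=math.radians(30))
print(len(car.corners()))  # → 8
```

Seven numbers per object are enough to describe the box, which is why small errors in any one of them (a size, the center, or the yaw) translate directly into a different training target.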

When cuboids are precisely placed, the model learns to recognize patterns that correspond to real-world objects. However, inconsistent or inaccurate cuboids introduce ambiguity into the training data. Over time, these small inaccuracies accumulate and affect how the model interprets spatial relationships between objects.

For example, if cuboids are slightly oversized, the model may learn that objects occupy a larger space than they actually do. If cuboids are misaligned or rotated incorrectly, the model may struggle to understand object orientation. Even small inconsistencies among annotators can lead to variability in object representation, ultimately reducing model reliability.
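One way to see how much a "slightly oversized" cuboid matters is to compute its intersection-over-union (IoU) with the correctly sized box. The hypothetical helper below ignores yaw and treats both boxes as axis-aligned to keep the arithmetic simple. Inflating every dimension by 10% around the same center already drops IoU to 1/1.1³, or about 0.75.

```python
def aligned_iou_3d(a, b):
    """IoU of two axis-aligned boxes given as (cx, cy, cz, l, w, h).

    Yaw is ignored for simplicity; IoU for rotated boxes needs polygon clipping.
    """
    inter = 1.0
    for i in range(3):
        # Overlap along axis i between the two boxes' [min, max] intervals.
        lo = max(a[i] - a[i + 3] / 2, b[i] - b[i + 3] / 2)
        hi = min(a[i] + a[i + 3] / 2, b[i] + b[i + 3] / 2)
        if hi <= lo:
            return 0.0
        inter *= hi - lo
    vol_a = a[3] * a[4] * a[5]
    vol_b = b[3] * b[4] * b[5]
    return inter / (vol_a + vol_b - inter)


true_box = (0.0, 0.0, 0.0, 4.5, 1.9, 1.6)
# Same center, every dimension 10% too large.
oversized = (0.0, 0.0, 0.0, 4.5 * 1.1, 1.9 * 1.1, 1.6 * 1.1)
print(round(aligned_iou_3d(true_box, oversized), 3))  # → 0.751
```

Because IoU is the usual way detections are matched to ground truth, a systematic oversizing like this shifts what the model is rewarded for predicting.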

These issues become particularly important in applications where perception accuracy is critical. In a self-driving car, for example, the model must distinguish between object types and know where each object is relative to the vehicle. If annotation errors go unchecked, the vehicle may misinterpret its surroundings, compromising safety and potentially leading to collisions. Clean and consistent cuboid annotations allow the model to learn these spatial patterns accurately.

Improving LiDAR model performance, therefore, begins with improving how cuboids are created during annotation. When cuboid creation workflows are standardized and optimized, teams can produce datasets that are both more accurate and more consistent—leading to stronger downstream model performance.

Flexibility in Cuboid Annotation

In real-world point clouds, objects rarely appear in perfectly uniform shapes or orientations. Vehicles may be partially occluded, pedestrians may appear at different angles, and sensor noise can make object boundaries difficult to interpret.

Because of this variability, annotators need to be able to adjust cuboids so that they accurately capture the shape and extent of each object in the scene. Small changes to cuboid size, orientation, or placement can significantly affect how well the annotation represents the real-world object.
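The effect of a small orientation error can be illustrated by counting how many of an object's points a cuboid actually contains. This sketch uses synthetic points on a 4 m x 2 m footprint (an assumption for illustration, not real sensor data) and a hypothetical `points_in_cuboid` helper. Rotating an otherwise correct cuboid by 10° leaves the corner points outside it.

```python
import math


def points_in_cuboid(points, center, size, yaw):
    """Count points (x, y, z) that fall inside an oriented cuboid.

    center = (cx, cy, cz), size = (length, width, height), yaw in radians
    about the z-axis.
    """
    cx, cy, cz = center
    length, width, height = size
    c, s = math.cos(yaw), math.sin(yaw)
    count = 0
    for x, y, z in points:
        # Express the point in the cuboid's local frame (inverse rotation).
        dx, dy = x - cx, y - cy
        lx = dx * c + dy * s
        ly = -dx * s + dy * c
        if abs(lx) <= length / 2 and abs(ly) <= width / 2 and abs(z - cz) <= height / 2:
            count += 1
    return count


# Synthetic object: a 4 m x 2 m footprint sampled on a 0.2 m grid at z = 0.
obj = [(i * 0.2, j * 0.2, 0.0) for i in range(-10, 11) for j in range(-5, 6)]
exact = points_in_cuboid(obj, (0.0, 0.0, 0.0), (4.0, 2.0, 1.0), 0.0)
rotated = points_in_cuboid(obj, (0.0, 0.0, 0.0), (4.0, 2.0, 1.0), math.radians(10))
print(exact, rotated)  # exact is 231 (every grid point); rotated is smaller
```

The same dimensions with a slightly wrong heading both miss real object points and enclose empty space, which is why orientation needs to be adjustable independently of size.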

Without flexible cuboid annotation, labels can quickly become inconsistent across datasets, introducing noise that affects model training.

However, achieving this level of flexibility through purely manual cuboid adjustments becomes increasingly difficult as datasets scale.

Issues with Traditional Annotation Workflows

Despite the importance of accuracy, many annotation workflows still rely heavily on manual cuboid placement and adjustment. Annotators typically draw a cuboid around an object and then manually adjust its size, orientation, and position to match the point cloud.

While this approach works for small datasets, it becomes increasingly problematic as projects scale.

- First, manual cuboid creation can be time-consuming and cognitively demanding. Annotators must repeatedly estimate object boundaries in point clouds that may be sparse, partially occluded, or noisy. Over time, this leads to fatigue, increasing the likelihood of labeling inconsistencies.

- Second, manual workflows often result in variability between annotators. Two annotators may interpret the same object differently, leading to slightly different cuboid sizes or placements. Even small differences accumulate across thousands of annotations, creating variability within the dataset.

- Third, traditional workflows slow down dataset production. As LiDAR datasets grow larger—often containing millions of frames—manual annotation becomes a bottleneck that delays model training and experimentation.
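Variability between annotators can be measured rather than only described. A minimal sketch, assuming two annotators have labeled the same object and their cuboids use a (cx, cy, cz, length, width, height, yaw) layout: the hypothetical helper below reports how far apart the two boxes are in position, size, and heading. The choice of these three metrics is illustrative.

```python
import math


def annotator_disagreement(box_a, box_b):
    """Compare two annotators' cuboids for the same object.

    Boxes are (cx, cy, cz, length, width, height, yaw). Returns the center
    offset (m), the largest dimension difference (m), and the yaw difference
    in degrees, wrapped to [0, 180].
    """
    center_offset = math.dist(box_a[:3], box_b[:3])
    dim_diff = max(abs(a - b) for a, b in zip(box_a[3:6], box_b[3:6]))
    # Wrap the angle difference so that, e.g., 179° and -179° count as 2° apart.
    dyaw = abs(box_a[6] - box_b[6]) % (2 * math.pi)
    dyaw = min(dyaw, 2 * math.pi - dyaw)
    return center_offset, dim_diff, math.degrees(dyaw)


a = (10.0, 2.0, 0.8, 4.5, 1.9, 1.6, 0.10)
b = (10.2, 2.0, 0.8, 4.7, 1.9, 1.6, 0.15)
print(annotator_disagreement(a, b))
```

Averaged over many shared objects, numbers like these give a rough picture of how much annotators drift apart on the same data.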

These challenges have led many teams to rethink how cuboids are created during annotation. Instead of relying solely on manual drawing and adjustment, modern workflows use tools that guide cuboid creation, helping annotators generate accurate labels more quickly and consistently.

By improving the cuboid creation process itself, organizations can reduce annotation friction while simultaneously improving dataset quality.

Workflow Improvements That Increase Accuracy

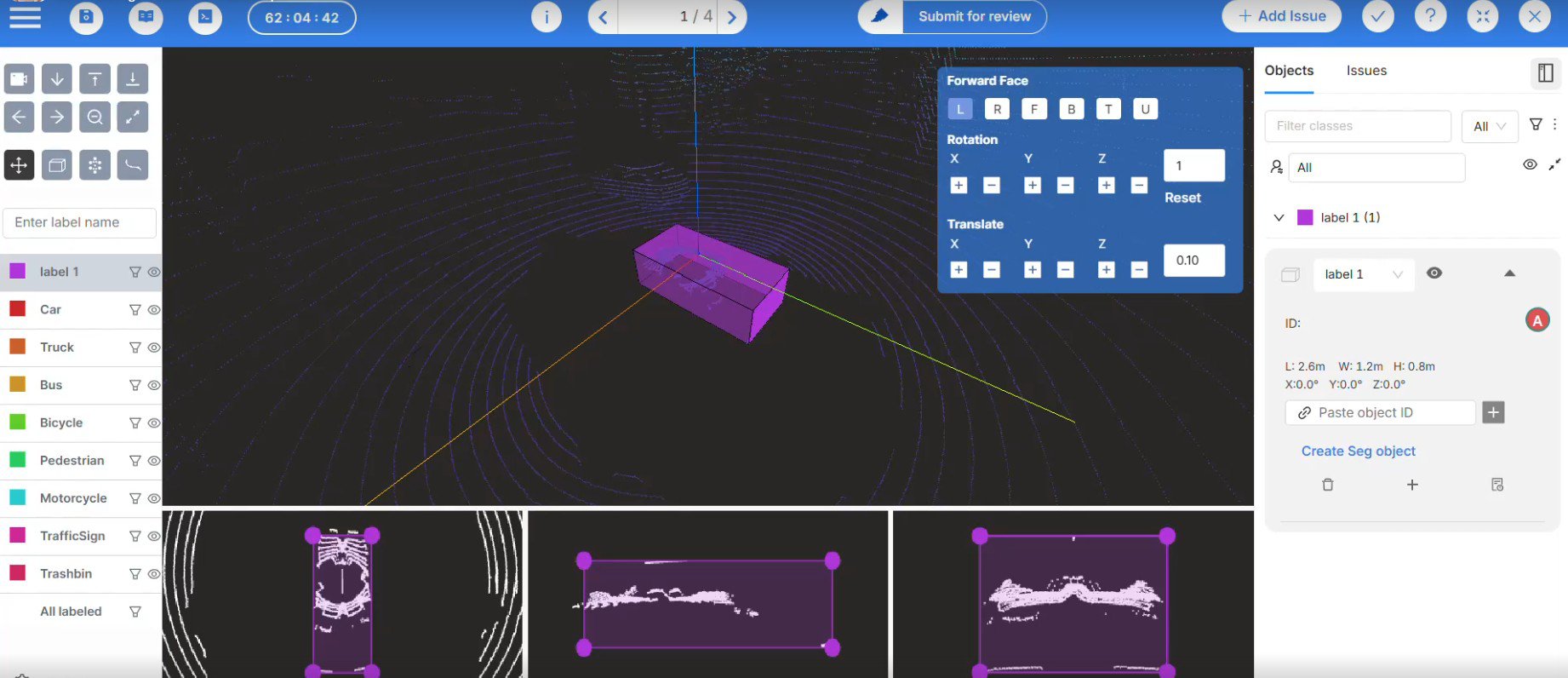

Improving LiDAR annotation accuracy often starts with improving how cuboids, the three-dimensional boxes that represent objects in space, are generated and adjusted during labeling. Modern annotation tools introduce workflows that help annotators create consistent cuboids more efficiently, reducing manual effort while improving label quality.

Below are several workflow improvements that can significantly enhance accuracy in LiDAR datasets:

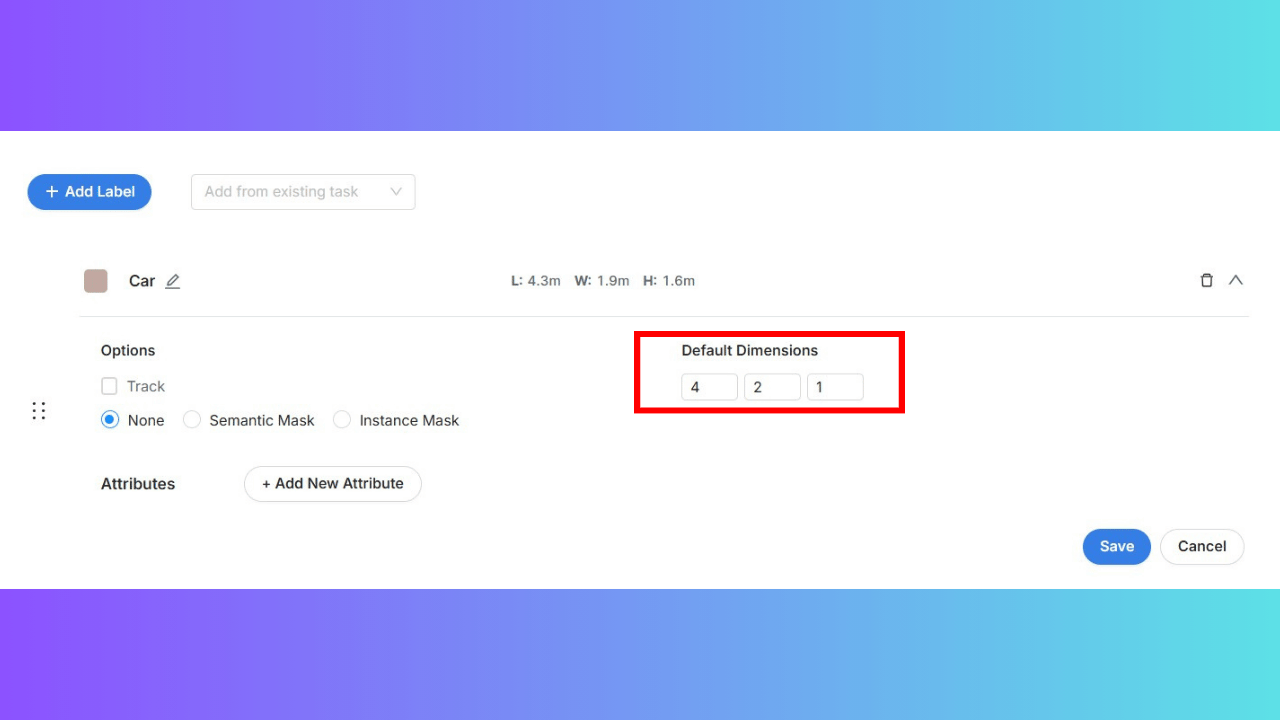

- Predefined Cuboid Sizes for Dataset Consistency

In many LiDAR datasets, certain object categories—such as cars, pedestrians, or traffic cones—have relatively predictable size ranges. However, when annotators manually adjust cuboids for every object, inconsistencies can arise.

One way to address this problem is through predefined cuboid templates. Annotators can place a cuboid of a predefined size at the location of an object and then make minor adjustments if necessary. This workflow helps standardize object dimensions across the dataset while speeding up the annotation process.

Mindkosh enables this through its default-sized cuboid feature, which can be configured when setting up labeling classes. Annotators can place these cuboids with a single click, ensuring consistent object dimensions while minimizing repetitive adjustments.

For large datasets, predefined cuboid sizes help maintain consistency across annotators and reduce the time required to label common object types.
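The template idea can be sketched in a few lines. This is not Mindkosh's implementation or configuration format; the `DEFAULT_SIZES` table, its values, and the `place_default_cuboid` helper are hypothetical. The sketch also assumes the annotator clicks on the object's ground contact point, so the cuboid center is raised by half the height.

```python
# Hypothetical per-class default sizes (length, width, height) in meters.
# Illustrative placeholders only, not measured statistics.
DEFAULT_SIZES = {
    "car": (4.5, 1.9, 1.6),
    "pedestrian": (0.6, 0.6, 1.7),
    "traffic_cone": (0.4, 0.4, 0.7),
}


def place_default_cuboid(label, click_xyz, yaw=0.0, sizes=DEFAULT_SIZES):
    """Create a cuboid of the class's default size at the clicked location.

    Assumes the clicked point is the object's ground contact point, so the
    center is lifted by half the height. The result is a starting point that
    the annotator can still fine-tune.
    """
    if label not in sizes:
        raise KeyError(f"no default size configured for class {label!r}")
    length, width, height = sizes[label]
    x, y, z = click_xyz
    return {
        "label": label,
        "center": (x, y, z + height / 2),
        "size": (length, width, height),
        "yaw": yaw,
    }


print(place_default_cuboid("car", (12.0, -3.0, 0.0)))
```

Keeping the sizes in one per-class table means every annotator starts from the same dimensions, so any remaining differences come only from deliberate adjustments.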

Mindkosh complements this with a locked-dimensions feature for cuboid creation. In sequential point cloud data, an object is often clearly visible in only a few frames, which makes it difficult to keep its cuboid dimensions consistent across the track.

Instead of changing the size of cuboids in each frame, annotators can set the right size for the object in the frame where it is most visible and use that size in all frames.

With locked dimensions in Mindkosh, any change made to a cuboid’s size in one frame is automatically applied to the entire track—ensuring consistent object representation while reducing manual effort.
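The underlying idea of sharing one size across a track can be sketched as follows. This is a hypothetical illustration, not Mindkosh's code: the track is assumed to map frame indices to dicts with `"size"` and `"num_points"`, and "the frame where the object is most visible" is approximated by the frame whose cuboid contains the most points.

```python
def lock_track_dimensions(track, reference_frame=None):
    """Apply one set of dimensions to every cuboid in a track.

    `track` maps frame index -> dict with "size" (l, w, h) and "num_points".
    If no reference frame is given, the frame whose cuboid contains the most
    points stands in for the frame where the object is most visible.
    Positions and yaw are left unchanged; only the size is shared.
    """
    if reference_frame is None:
        reference_frame = max(track, key=lambda f: track[f]["num_points"])
    locked = track[reference_frame]["size"]
    return {frame: {**box, "size": locked} for frame, box in track.items()}


track = {
    0: {"size": (4.0, 1.8, 1.5), "num_points": 12},   # far away, sparse
    1: {"size": (4.6, 1.9, 1.6), "num_points": 240},  # close, well sampled
    2: {"size": (3.9, 1.7, 1.5), "num_points": 30},   # partly occluded
}
print(lock_track_dimensions(track))
```

A rigid object does not change size between frames, so taking its dimensions from the best-sampled frame avoids shrinking the box wherever the object is sparse or occluded.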

- Using Point Selection to Capture Object Boundaries

Point clouds often contain irregular or sparse data, making it difficult to estimate the true boundaries of an object. Annotators may struggle to visually determine the correct cuboid size when objects are partially occluded or when point density is uneven.

A workflow that improves this process is point-based object selection. Instead of guessing the object's boundaries first, annotators can outline the points that belong to it. The system then generates a cuboid that encompasses those selected points.

This approach aligns the cuboid more closely with the actual spatial distribution of the object within the point cloud.

Mindkosh supports this workflow through its lasso tool, which allows annotators to outline the points representing an object. The platform then generates a cuboid that fits the selected region, helping ensure the cuboid reflects the true object boundaries.

By building cuboids from the actual point data, annotators can produce labels that more accurately represent real-world objects.
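A lasso-to-cuboid step can be sketched in two parts: select the points whose bird's-eye-view (x, y) position falls inside the drawn polygon, then fit a box around them. The helper names are hypothetical, the lasso is assumed to be drawn in a top-down view, and the fitted box is axis-aligned for simplicity. How Mindkosh's lasso tool fits its cuboid is not described here.

```python
def point_in_polygon(x, y, polygon):
    """Even-odd (ray casting) test of a 2D point against a list of (x, y) vertices."""
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Does a horizontal ray going right from (x, y) cross this edge?
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def cuboid_from_lasso(points, lasso):
    """Fit an axis-aligned cuboid to the points whose (x, y) lie inside the lasso.

    Returns (center, size), or None if the lasso selected no points.
    """
    selected = [p for p in points if point_in_polygon(p[0], p[1], lasso)]
    if not selected:
        return None
    mins = [min(p[i] for p in selected) for i in range(3)]
    maxs = [max(p[i] for p in selected) for i in range(3)]
    center = tuple((lo + hi) / 2 for lo, hi in zip(mins, maxs))
    size = tuple(hi - lo for lo, hi in zip(mins, maxs))
    return center, size


cloud = [(1.0, 1.0, 0.0), (2.0, 1.5, 1.0), (1.5, 2.0, 0.5), (10.0, 10.0, 0.0)]
lasso = [(0.0, 0.0), (3.0, 0.0), (3.0, 3.0), (0.0, 3.0)]
print(cuboid_from_lasso(cloud, lasso))  # the point at (10, 10) is ignored
```

Because the box is derived from the selected points rather than from a visual guess, its extent follows the object's actual returns.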

- One-Click Cuboid Creation for Faster, More Accurate Labeling

In traditional annotation workflows, annotators manually draw a cuboid and then adjust its orientation and dimensions. This process is repetitive and increases the likelihood of inconsistencies between annotations.

One way to address this challenge is through automated cuboid generation. With a one-click annotation approach, the platform automatically creates an oriented cuboid around the selected object. Instead of manually drawing each cuboid from scratch, annotators can quickly generate a correctly oriented cuboid and then make small adjustments if needed.

This approach significantly reduces the time required to annotate each object while improving consistency across the dataset.

On Mindkosh, the 1-click annotation feature allows annotators to generate an oriented cuboid instantly. By automating the initial cuboid creation step, teams can produce more consistent annotations while reducing manual workload.
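Platforms generally do not document exactly how their one-click generation works. One common, simple technique for estimating an oriented box from a cluster of object points is principal component analysis (PCA) on the points' bird's-eye-view coordinates: the direction of greatest spread gives the heading, and the box is the tight extent of the points in that rotated frame. The sketch below uses NumPy and is an illustration of that technique, not a description of Mindkosh's method. It assumes the object's points have already been isolated.

```python
import numpy as np


def fit_oriented_cuboid(points):
    """Fit an oriented cuboid to an (N, 3) array of object points via BEV PCA.

    Returns (center, size, yaw): center and size as length-3 arrays, yaw in
    radians in [-pi/2, pi/2).
    """
    pts = np.asarray(points, dtype=float)
    xy = pts[:, :2]
    mean_xy = xy.mean(axis=0)
    cov = np.cov((xy - mean_xy).T)          # 2x2 covariance of x and y
    _, eigvecs = np.linalg.eigh(cov)        # eigenvalues come back ascending
    major = eigvecs[:, -1]                  # direction of largest spread
    yaw = np.arctan2(major[1], major[0])
    # PCA axes have no sign, so fold the heading into [-pi/2, pi/2).
    yaw = (yaw + np.pi / 2) % np.pi - np.pi / 2
    c, s = np.cos(yaw), np.sin(yaw)
    rot = np.array([[c, -s], [s, c]])       # maps local -> world
    local = (xy - mean_xy) @ rot            # maps world -> local (row vectors)
    lo, hi = local.min(axis=0), local.max(axis=0)
    center_xy = mean_xy + rot @ ((lo + hi) / 2)
    z_lo, z_hi = pts[:, 2].min(), pts[:, 2].max()
    center = np.array([center_xy[0], center_xy[1], (z_lo + z_hi) / 2])
    size = np.array([hi[0] - lo[0], hi[1] - lo[1], z_hi - z_lo])
    return center, size, float(yaw)


# Synthetic 4 m x 2 m object rotated by 30 degrees and centered at (10, 5).
gx, gy = np.meshgrid(np.linspace(-2, 2, 41), np.linspace(-1, 1, 21))
local_xy = np.stack([gx.ravel(), gy.ravel()], axis=1)
t = np.pi / 6
R = np.array([[np.cos(t), -np.sin(t)], [np.sin(t), np.cos(t)]])
world_xy = local_xy @ R.T + np.array([10.0, 5.0])
z = np.where(np.arange(len(world_xy)) % 2 == 0, 0.0, 1.5)
center, size, yaw = fit_oriented_cuboid(np.column_stack([world_xy, z]))
print(center.round(3), size.round(3), round(np.degrees(yaw), 1))
```

PCA is fast and needs no training, but it can be fooled when only one side of an object is visible; that is one reason a generated cuboid still benefits from a quick manual check.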

A Checklist for Building High-Accuracy LiDAR Annotations

Improving LiDAR annotation accuracy requires more than individual features—it requires a well-designed cuboid annotation workflow. Teams can produce datasets that better reflect real-world conditions and support stronger model performance when they structure annotation processes to prioritize both flexibility and consistency.

Organizations building high-quality LiDAR datasets often follow a few key practices that help ensure cuboids are placed accurately and consistently across large datasets.

- Standardize cuboid creation workflows.

Using consistent methods for creating cuboids helps reduce variability between annotators. Guided cuboid creation tools, such as one-click cuboid generation or predefined cuboid templates, can help teams maintain consistency across datasets.

- Enable flexible cuboid customization.

Annotators should be able to adjust cuboid size, orientation, and placement to match the true geometry of objects in the point cloud. This flexibility ensures that cuboids capture object boundaries as accurately as possible.

- Use guided cuboid generation to reduce manual effort.

Tools such as point-selection workflows or automated cuboid placement allow annotators to generate cuboids more efficiently while maintaining accuracy. This reduces repetitive adjustments and improves consistency across large datasets.

- Define clear labeling guidelines.

Well-defined annotation guidelines help teams maintain consistency when labeling different object types. Clear rules for object boundaries and cuboid placement reduce ambiguity during annotation.

- Regularly review dataset quality.

Quality assurance processes help identify inconsistencies early. Reviewing annotations at regular intervals ensures cuboids remain accurate and aligned with labeling standards.
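Part of a regular review can be automated by flagging cuboids whose dimensions are unusual for their class, so that reviewers look at those first. This is a minimal sketch using a median-absolute-deviation (MAD) rule. The helper name, the annotation dict layout, and the `tolerance` and `min_deviation` defaults are hypothetical choices, and a flag marks a candidate for human review, not a confirmed error.

```python
from statistics import median


def flag_size_outliers(annotations, tolerance=3.0, min_deviation=0.1):
    """Return ids of cuboids whose size deviates strongly from their class's typical size.

    `annotations` is a list of dicts with "id", "label", and "size" (l, w, h)
    in meters. For each class and dimension, a cuboid is flagged when its
    distance from the class median exceeds max(tolerance * MAD, min_deviation).
    The floor keeps tiny differences from being flagged when MAD is zero.
    """
    by_class = {}
    for ann in annotations:
        by_class.setdefault(ann["label"], []).append(ann)
    flagged = set()
    for anns in by_class.values():
        for dim in range(3):
            values = [a["size"][dim] for a in anns]
            med = median(values)
            mad = median(abs(v - med) for v in values)
            threshold = max(tolerance * mad, min_deviation)
            for a in anns:
                if abs(a["size"][dim] - med) > threshold:
                    flagged.add(a["id"])
    return sorted(flagged)


lengths = [4.4, 4.5, 4.6, 4.5, 4.4, 9.0]  # the 9.0 m "car" is suspicious
cars = [{"id": f"car_{i}", "label": "car", "size": (l, 1.9, 1.6)}
        for i, l in enumerate(lengths, start=1)]
print(flag_size_outliers(cars))  # → ['car_6']
```

Using the median and MAD instead of the mean and standard deviation keeps a single badly sized cuboid from distorting the reference it is compared against.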

By combining flexible cuboid creation, guided workflows, and strong annotation practices, organizations can significantly improve LiDAR dataset quality while scaling annotation pipelines more efficiently. Mindkosh gives annotators the flexibility to customize each cuboid so that it closely fits the object being labeled, while also helping speed up the process. The AI-assisted cuboid creation tools described in this article are the ones Mindkosh offers to help teams produce cleaner and more consistent labels.

Conclusion

For teams building perception systems with LiDAR data, the quality of annotations directly shapes how well models understand the physical world. When cuboids are inconsistent or poorly aligned with objects in the point cloud, those small inaccuracies can propagate through the dataset and affect model performance.

Improving LiDAR annotation accuracy, therefore, begins with improving the cuboid annotation workflow itself. Giving annotators the flexibility to accurately represent objects—while providing tools that guide cuboid creation—helps teams produce cleaner and more consistent labels across large datasets.

Modern annotation platforms are designed to support these workflows by making cuboid creation faster, more consistent, and easier to scale. With tools such as 1-click cuboid generation, lasso-based object selection, and configurable cuboid templates, Mindkosh helps teams generate high-quality LiDAR annotations while maintaining the flexibility needed to represent real-world scenes accurately.

If your organization relies on LiDAR data and is looking to improve annotation accuracy while scaling dataset production, request a demo to see how Mindkosh can support your annotation workflow.