Light Detection and Ranging (LiDAR) technology has revolutionized how machines perceive and interact with their environment. By emitting laser pulses and measuring the time they take to return, LiDAR systems generate detailed 3D representations of surroundings—referred to as point clouds. These point clouds have become instrumental in fields like autonomous driving, robotics, aerial mapping, and real-time object detection.

As machine learning and artificial intelligence (AI) systems become more reliant on 3D perception, the quality of the training data becomes paramount. Accurate annotation of LiDAR data is not just helpful; it's essential. Machine learning models for object detection, scene understanding, and path planning hinge on the precision of these annotations. However, unlike 2D images, LiDAR data brings unique and complex challenges to the table. Annotating hundreds of thousands, and sometimes millions, of sparse and irregular points per frame is no trivial task.

This blog explores the key challenges that define LiDAR data annotation and sheds light on the innovations and solutions emerging to address them.

Complexity of LiDAR data

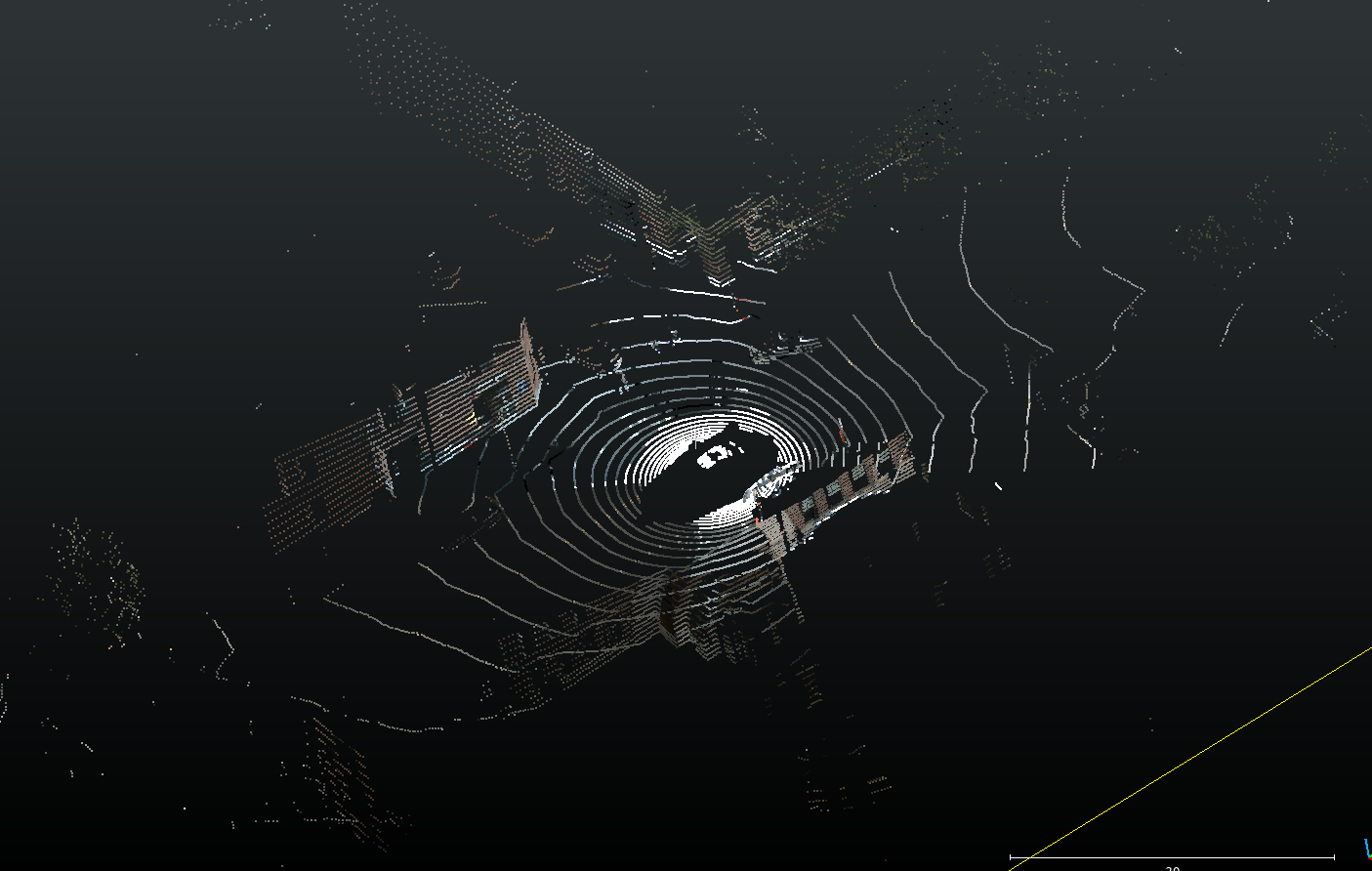

LiDAR data is structurally different from images or video. A LiDAR sensor produces point clouds: collections of data points in a three-dimensional coordinate system, often consisting of hundreds of thousands to millions of individual points per frame. These points capture geometric and spatial information about surfaces, objects, and structures in the scene.

Key challenges in complexity:

- High Resolution: Modern LiDAR systems produce detailed scans that can contain hundreds of thousands to millions of points per frame. While this is valuable for precision, it also drastically increases processing time and annotation complexity.

- Sparsity and Density: Point clouds are often sparse, especially at longer ranges. Yet, in urban environments, they can be densely packed—like around intersections or marketplaces—making segmentation harder.

- Dynamic Environments: Scenes are rarely static. Moving vehicles, people, animals, and varying object shapes demand an adaptive annotation approach.

- Elevation and Occlusion: Objects appear at different elevations and may occlude one another, making context-based labeling critical.

The sheer volume and variety of data introduce a fundamental layer of difficulty before annotation even begins.

Annotation process challenges

Annotating LiDAR data isn't as simple as drawing boxes or polygons in images. Due to its 3D nature, every annotation must consider depth, orientation, and scale. This makes the process time-consuming, prone to inconsistencies, and heavily reliant on expert annotators.

Labeling point clouds

In 2D images, pixels have defined neighbors: each interior pixel has 8 neighbors that are spatially correlated with it. A point cloud, by contrast, is an unordered set of points with no built-in grid, so which points belong together has to be inferred from context. This means understanding which points belong to an object is not straightforward, and requires the right viewing angle and experience working with point clouds.

- Context Awareness: Annotators must label each point based on 3D geometry and its relationship to the surrounding scene.

- Obstruction & Partial Visibility: Like in images, objects in 3D point clouds can also be obstructed by other objects. For example, cars partially hidden behind bushes or pedestrians walking behind signboards can create ambiguity and difficulty in understanding the object and its dimensions.

- Sparse Regions: Point density drops rapidly with distance from the LiDAR sensor. In areas far from the sensor, where points are thinly spread, it's hard to interpret object boundaries, leading to potential mislabels.

- Crowded Areas: In busy scenes, separating closely placed objects becomes a tedious, highly detailed task.
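
To get a feel for how quickly density falls off, note that the gap between neighboring returns on one laser ring grows roughly linearly with range: spacing ≈ range × angular resolution (in radians). The sketch below works this through. The 0.2° horizontal resolution and the 1.8 m car width are illustrative assumptions, not figures from any particular sensor, and the helper names are made up for this example.

```python
import math

def point_spacing(range_m: float, angular_res_deg: float) -> float:
    """Approximate gap (metres) between adjacent returns on one ring at a given range."""
    return range_m * math.radians(angular_res_deg)

def returns_across(width_m: float, range_m: float, angular_res_deg: float) -> int:
    """Rough count of returns per ring on a flat surface of this width facing the sensor."""
    return int(width_m / point_spacing(range_m, angular_res_deg))

# Assumed values for illustration only: 0.2 degree resolution, 1.8 m wide car.
for r in (10, 50, 100):
    print(f"{r:>3} m: spacing {point_spacing(r, 0.2):.3f} m, "
          f"{returns_across(1.8, r, 0.2)} returns per ring")
```

Under these assumptions the same car goes from roughly 50 returns per ring at 10 m to about 5 at 100 m, which is why distant objects are so hard to outline.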

Cuboid annotation

Cuboids, or 3D bounding boxes, are used to define objects like cars, people, and bicycles in 3D space. Cuboid annotation is vastly more complicated to perform than its 2D counterpart, the bounding box. This is due to the following issues:

- 3D Complexity: A 2D bounding box needs just two opposite corner points to define it completely. In contrast, a 3D bounding box can only be defined with either the 8 vertices of the cuboid, or its center, its 3 dimensions, and its orientation about the 3 spatial axes. This makes it very time-consuming to label accurately.

- Irregular Shapes: Many real-world objects have curves or irregular geometry (e.g., trees or construction machinery), which means they do not fall neatly within simple cuboid-friendly shapes.

- Partial Occlusion: Determining full object boundaries when only a part is visible is difficult. This becomes especially challenging when labeling objects farther away from the LiDAR sensor.

- Crowded Scenes: In traffic-heavy environments, placing cuboids without overlaps and ensuring they correctly represent depth and orientation is especially tough.
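
The two cuboid representations mentioned above are interchangeable. As a minimal sketch, the snippet below converts the center, dimensions, and orientation form into the 8 vertices. It assumes rotation about the vertical axis only (yaw), which is a common simplification for road scenes. Full 3-axis orientation would need a complete rotation matrix. The `Cuboid` class and its field names are hypothetical.

```python
import math
from dataclasses import dataclass

@dataclass
class Cuboid:
    # Center of the box in the sensor frame (metres).
    cx: float
    cy: float
    cz: float
    # Full extents along the box's own axes (metres).
    length: float
    width: float
    height: float
    # Rotation about the vertical (z) axis in radians.
    # Roll and pitch are assumed to be zero in this sketch.
    yaw: float = 0.0

def cuboid_corners(c: Cuboid) -> list[tuple[float, float, float]]:
    """Return the 8 corner vertices of a yaw-rotated cuboid."""
    cos_y, sin_y = math.cos(c.yaw), math.sin(c.yaw)
    corners = []
    for sx in (-0.5, 0.5):
        for sy in (-0.5, 0.5):
            for sz in (-0.5, 0.5):
                # Corner offset in the box's local frame.
                lx, ly, lz = sx * c.length, sy * c.width, sz * c.height
                # Rotate about z, then translate to the center.
                x = c.cx + lx * cos_y - ly * sin_y
                y = c.cy + lx * sin_y + ly * cos_y
                corners.append((x, y, c.cz + lz))
    return corners

car = Cuboid(cx=10.0, cy=2.0, cz=0.8, length=4.5, width=1.8, height=1.6, yaw=math.pi / 2)
for corner in cuboid_corners(car):
    print(tuple(round(v, 2) for v in corner))
```

Seven numbers (or nine with roll and pitch) are far more compact than 24 vertex coordinates, but the annotator still has to get every one of them right, which is where the labeling time goes.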

Segmentation labeling

Semantic segmentation involves assigning a label to every point in the point cloud — like "vehicle," "road," "tree," or "pedestrian." This is similar to segmentation in 2D images where every pixel is assigned a label. However, due to the 3D nature of the data, point cloud segmentation is more difficult to get right.

- Point-Level Accuracy in 3D: Segmentation demands the same per-element granularity as 2D segmentation, but for points spread across an additional dimension.

- Sensor Noise: Variations in reflectance and surface properties (like glass or metal) distort readings.

- Ambiguous Categories: Differentiating between similar-looking objects, like motorcycles and bicycles or benches and boxes, is often subjective without context. Unlike images, most point clouds do not have color information, which makes it difficult to accurately identify the right class for an object.

- Separating Ground: While this is not a big problem with cuboid annotation, identifying which points belong to the object and which belong to the ground it's standing on is difficult and time-consuming.
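
As a rough illustration of the ground-separation problem, here is a crude first pass that splits the points in a small region (say, those inside a cuboid) by height. It assumes the ground under the object is locally flat and level, which often does not hold. The function name `split_ground` and the 0.15 m tolerance are assumptions made for this sketch.

```python
def split_ground(points, tolerance=0.15):
    """Split (x, y, z) points into (object_points, ground_points).

    Assumes the ground in this region is flat and level, and that the
    lowest returns are ground. Both assumptions break on slopes or
    kerbs, where fitting a plane would be a better choice.
    """
    if not points:
        return [], []
    zs = sorted(p[2] for p in points)
    # Use a low percentile rather than the strict minimum; with enough
    # points this keeps a few stray below-ground returns from dragging
    # the estimate down.
    ground_z = zs[int(0.05 * (len(zs) - 1))]
    obj, ground = [], []
    for p in points:
        (ground if p[2] <= ground_z + tolerance else obj).append(p)
    return obj, ground

region = [
    (0.0, 0.0, 0.00), (1.0, 0.0, 0.02), (0.0, 1.0, -0.01), (1.0, 1.0, 0.05),  # road surface
    (0.5, 0.5, 0.40), (0.5, 0.5, 0.90), (0.5, 0.5, 1.40),                      # object
]
obj, ground = split_ground(region)
print(len(obj), "object points,", len(ground), "ground points")
```

A threshold like this inevitably clips the lowest part of the object (tyres, feet), so a human pass over the boundary is still needed.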

Noise, outliers and other variabilities in point clouds

LiDAR systems are susceptible to environmental interference. Weather, lighting, and surface reflectance can introduce noise and outliers into the data. This presents more problems when annotating point clouds.

Sources of noise:

- Environmental Effects: Rain, snow, and fog scatter laser beams, creating ghost points or blank zones.

- Reflective Surfaces: Mirrors, windows, and shiny vehicle surfaces can generate erroneous points.

- Motion Blur: Movement during scanning can warp object shapes, leading to distorted point clusters. This is usually not a problem when viewing individual scans, but it can be a big problem when point clouds are created by accumulating data from multiple scans.

Addressing the issue:

A few preprocessing measures can alleviate some of these problems. Note, however, that even with these measures, noise and outliers remain significant barriers to consistent, high-quality annotation.

- Statistical Outlier Removal (SOR): Filters out isolated points by comparing each point's mean distance to its nearest neighbors against the average across the whole cloud.

- Voxel Grid Filtering: Reduces data complexity by dividing space into cubic volumes (voxels) and replacing the points in each voxel with their average.
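
Both filters are simple enough to sketch in plain Python. The version below is a brute-force illustration, not how a production library implements them: real tools use a k-d tree for the neighbor search. The parameter names and defaults (`k`, `std_ratio`, `voxel_size`) are assumptions chosen for this example.

```python
import math
from collections import defaultdict

def statistical_outlier_removal(points, k=8, std_ratio=2.0):
    """Keep points whose mean distance to their k nearest neighbors is at most
    std_ratio standard deviations above the cloud-wide mean of that distance.

    Brute force, O(n^2): fine for a demo, too slow for real scans.
    """
    if len(points) <= k:
        return list(points)
    mean_dists = []
    for i, p in enumerate(points):
        dists = sorted(math.dist(p, q) for j, q in enumerate(points) if j != i)
        mean_dists.append(sum(dists[:k]) / k)
    mu = sum(mean_dists) / len(mean_dists)
    sigma = math.sqrt(sum((d - mu) ** 2 for d in mean_dists) / len(mean_dists))
    threshold = mu + std_ratio * sigma
    return [p for p, d in zip(points, mean_dists) if d <= threshold]

def voxel_grid_filter(points, voxel_size=0.2):
    """Replace all points that fall in the same cubic voxel with their centroid."""
    buckets = defaultdict(list)
    for p in points:
        key = tuple(math.floor(c / voxel_size) for c in p)
        buckets[key].append(p)
    return [tuple(sum(axis) / len(pts) for axis in zip(*pts)) for pts in buckets.values()]

# A flat 5 x 5 patch of points plus one stray return far away from it.
cloud = [(x * 0.1, y * 0.1, 0.0) for x in range(5) for y in range(5)] + [(10.0, 10.0, 10.0)]
cleaned = statistical_outlier_removal(cloud, k=4)
print(len(cloud), "->", len(cleaned), "after SOR ->", len(voxel_grid_filter(cleaned)), "after voxel filter")
```

Note the trade-off: SOR can also delete legitimate sparse returns from distant objects, and voxel filtering blurs fine detail, so both are usually tuned per dataset rather than applied blindly.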

Other factors affecting annotation

Real-world environments are unpredictable and constantly changing. This variability has a direct impact on the clarity and consistency of LiDAR data.

- Weather Conditions: Fog, rain, and snow scatter LiDAR beams, reducing data quality.

- Lighting Conditions: LiDAR is an active sensor and does not depend on ambient light, but night-time or backlit scenes degrade the camera images that annotators rely on for context, which complicates data interpretation.

- Dynamic Objects: Annotating moving pedestrians, cyclists, or vehicles in successive frames requires temporal consistency.

- Terrain and Elevation: Hills, slopes, and uneven terrain add further challenges in placing accurate 3D labels.

Effective annotation pipelines must be built to handle edge cases and continuously adapt to real-world scenarios.

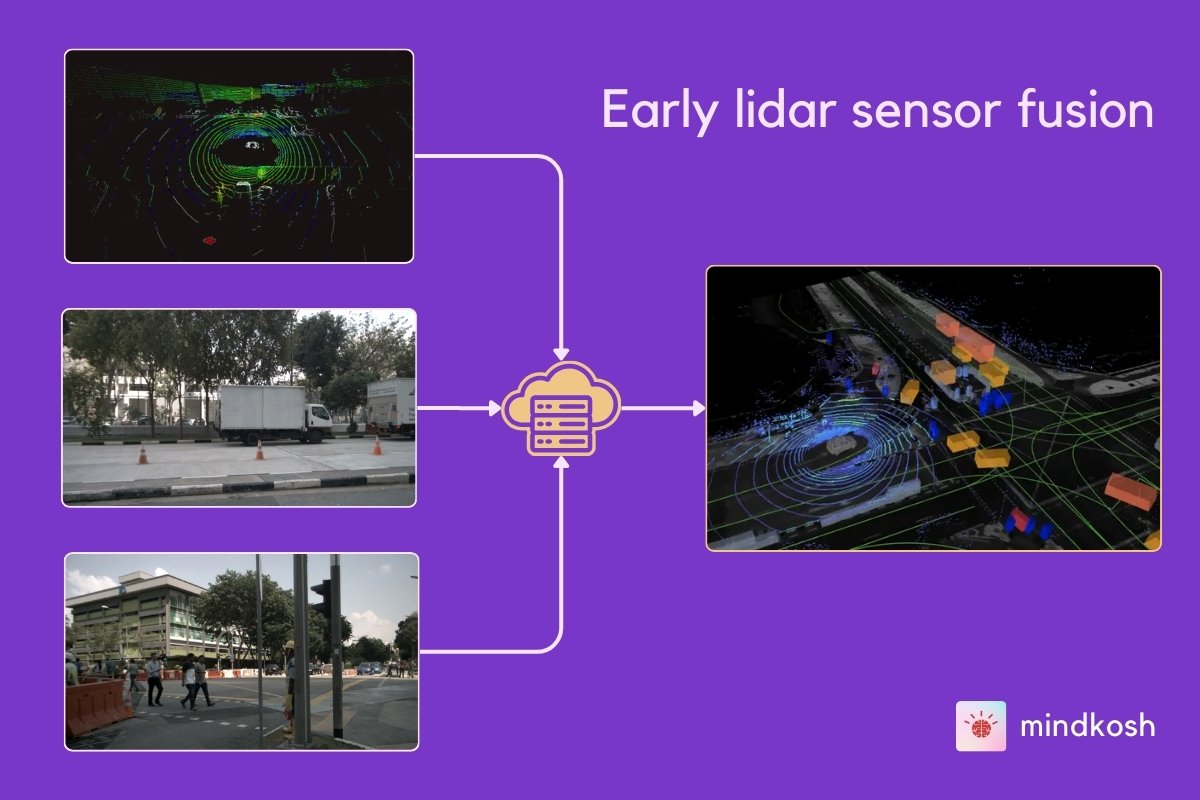

Multi-sensor integration

Many perception systems combine LiDAR with cameras, radar, or inertial sensors to gain a richer understanding of the environment. This process, called sensor fusion, offers benefits that processing each data stream separately cannot.

Benefits:

- Improved Object Recognition: Visual texture from cameras complements LiDAR’s geometric precision.

- Robustness in Variable Conditions: Radar can detect objects even in fog or rain, where LiDAR may falter.

Integration challenges:

- Time Synchronization: Sensors must capture data at the same moment. Even a few milliseconds of drift can skew results.

- Coordinate System Alignment: Each sensor has its own reference frame. Proper calibration is essential to merge data correctly.

- Annotation Alignment: Labels must be consistent across modalities—a misaligned bounding box in LiDAR and camera views can degrade model performance.

Annotation tools that support sensor fusion need to be equipped with calibration metadata and smart projection capabilities, which adds to system complexity.
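
To make the coordinate alignment and projection step concrete, here is a minimal sketch of projecting a single LiDAR point into a camera image using a pinhole model. It assumes the calibration supplies a LiDAR-to-camera rotation and translation (extrinsics) plus focal lengths and principal point (intrinsics), and it ignores lens distortion. The function name and all numeric values are illustrative assumptions.

```python
def project_to_image(point_lidar, rotation, translation, fx, fy, cx, cy, width, height):
    """Project one LiDAR point (x, y, z) into pixel coordinates.

    rotation (3x3 nested lists) and translation (3 values) are the assumed
    LiDAR-to-camera extrinsics; fx, fy, cx, cy are pinhole intrinsics.
    Lens distortion is ignored. Returns (u, v), or None if the point is
    behind the camera or falls outside the image.
    """
    # Extrinsics: move the point from the LiDAR frame into the camera frame.
    xc, yc, zc = (
        sum(rotation[r][c] * point_lidar[c] for c in range(3)) + translation[r]
        for r in range(3)
    )
    if zc <= 0:  # the camera looks along +z, so this point is behind it
        return None
    # Intrinsics: perspective divide, then scale and shift into pixels.
    u = fx * xc / zc + cx
    v = fy * yc / zc + cy
    if 0 <= u < width and 0 <= v < height:
        return (u, v)
    return None

# Assumed axes: LiDAR is x-forward, y-left, z-up; camera is x-right, y-down, z-forward.
R = [[0, -1, 0],
     [0, 0, -1],
     [1, 0, 0]]
t = [0.0, 0.0, 0.0]  # sensors treated as co-located for simplicity

for pt in [(10.0, 0.0, 0.0), (10.0, 1.0, 0.0), (-5.0, 0.0, 0.0)]:
    print(pt, "->", project_to_image(pt, R, t, 1000, 1000, 640, 360, 1280, 720))
```

A small error in the rotation matrix shifts every projected point, which is why a cuboid that looks correct in the point cloud can appear offset in the camera view when calibration is off.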

High-quality annotation requirements

To train effective AI models for real-time decision-making, the quality of LiDAR annotations cannot be compromised.

What does high-quality annotation entail?

- Precision at Scale: Every object—small or large—must be labeled with geometric accuracy.

- Expert Annotators: Understanding 3D geometry and object behavior is critical. Training annotators takes time and effort.

- Multi-Pass QA: Quality control through multiple validation rounds ensures consistency across datasets.

- Advanced Tooling: Features like smart interpolation, class-based filtering, and AI-assisted pre-labeling help accelerate and refine the process.

However, achieving this level of detail is expensive and time-consuming, especially when dealing with large-scale datasets for enterprises or research labs.

Labeling tools to alleviate the problems

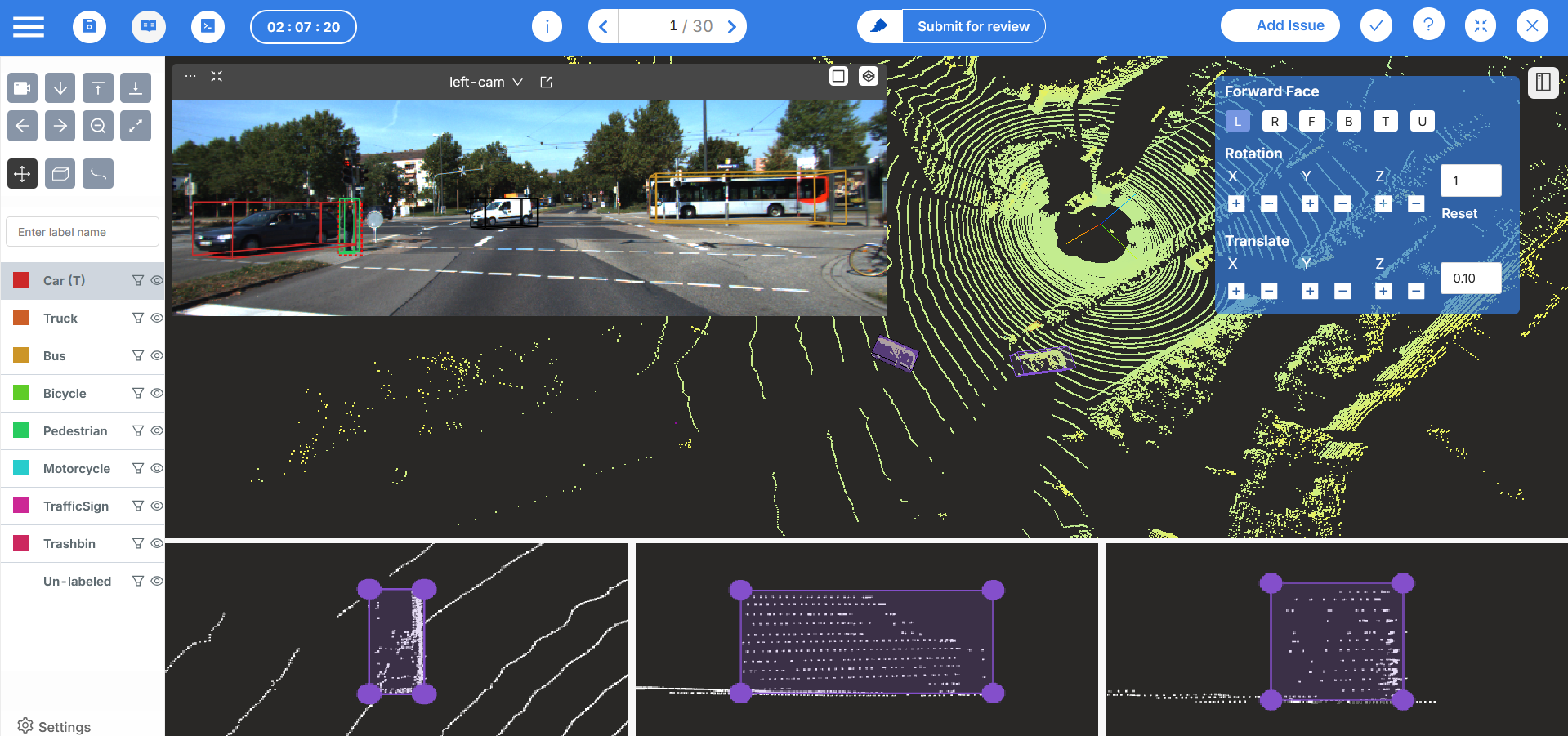

Modern labeling tools have evolved significantly to address the challenges described above and offer sophisticated solutions that can dramatically improve both efficiency and accuracy. Here is how an annotation platform like Mindkosh helps labeling teams prepare high-quality labeled datasets:

AI Assistance

Many labeling platforms now incorporate machine learning-based tools that can automatically detect and pre-label common objects like vehicles, pedestrians, and road infrastructure. This significantly reduces the manual effort required and provides a consistent starting point for human annotators to refine. For example, Mindkosh offers interactive segmentation for images and a 1-click annotation tool for point cloud data.

3D Visualization and Navigation

Mature labeling platforms allow annotators to view point clouds from multiple angles, zoom in on fine details, and navigate through complex scenes intuitively. This addresses the spatial complexity challenge by making it easier to understand object boundaries in 3D space. For example, Mindkosh supports loading large, dense point clouds, lets you filter objects, and can color point clouds by intensity or height.

Integration with Multiple Sensors

A key feature that can instantly improve the quality of labeling is the ability to fuse LiDAR data with camera images, providing annotators with richer context that vastly improves labeling accuracy and reduces ambiguity in challenging scenarios. Because point density drops with distance from the LiDAR sensor, having camera images as context can be a great help in identifying objects.

Collaborative Workflows and Quality Control

Good annotation tools support communication channels, customizable annotation workflows, and quality assurance pipelines. This helps maintain consistency across large datasets and reduces subjective interpretation errors. On Mindkosh, our issue management system lets you create tickets with the right context and brings all stakeholders together. We also support multi-annotator setups, honeypots, and flexible annotation workflows.

Efficient Annotation Tools

A lot of the complexity can be reduced with more efficient annotation tools. Interpolation is a common technique that reduces labeling time by automatically filling in annotations on intermediate frames from the ones an annotator creates manually. On Mindkosh, we have several such tools, like creating cuboids of a predefined size, locking the dimensions of an object so they don't change from frame to frame, creating a segmentation object from a cuboid, and many others.
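
To show what interpolation does in its simplest form, the sketch below linearly interpolates a cuboid between two manually labeled keyframes and keeps the dimensions locked. This is a generic illustration, not a description of how Mindkosh or any other tool implements it. The keyframe dictionary layout is hypothetical.

```python
import math

def interpolate_cuboid(key_a, key_b, frame):
    """Linearly interpolate a cuboid between two keyframes.

    Each keyframe is a dict with 'frame', 'center' (x, y, z), 'size'
    (length, width, height) and 'yaw' in radians. This layout is a
    hypothetical one chosen for the example. The two keyframes must be
    on different frames.
    """
    span = key_b["frame"] - key_a["frame"]
    t = (frame - key_a["frame"]) / span
    center = tuple(a + t * (b - a) for a, b in zip(key_a["center"], key_b["center"]))
    # Wrap the yaw difference into [-pi, pi) so the box turns the short way round.
    dyaw = (key_b["yaw"] - key_a["yaw"] + math.pi) % (2 * math.pi) - math.pi
    return {
        "frame": frame,
        "center": center,
        # A rigid object keeps its dimensions, so size is copied, not interpolated.
        "size": key_a["size"],
        "yaw": key_a["yaw"] + t * dyaw,
    }

start = {"frame": 0, "center": (0.0, 0.0, 0.8), "size": (4.5, 1.8, 1.6), "yaw": 0.0}
end = {"frame": 10, "center": (10.0, 2.0, 0.8), "size": (4.5, 1.8, 1.6), "yaw": 0.2}
for f in (2, 5, 8):
    print(interpolate_cuboid(start, end, f))
```

Linear interpolation only holds up while the object moves at a roughly constant speed and turn rate, so annotators add more keyframes wherever a vehicle brakes, accelerates, or turns sharply.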

Experienced labeling teams

While a good annotation tool can be the difference between a good and a bad labeled dataset, another huge factor is the capability of the labeling team. Not all labeling teams are created equal, and when labeling complex multi-sensor data, it's extremely important that the labelers have prior experience and training for labeling such data. If you are looking for such a team, let us know - we can help!

Conclusion

LiDAR data annotation lies at the heart of modern 3D perception systems, but it is far from straightforward. Annotators must wrestle with challenges that range from the inherent complexity of point clouds to the variability of real-world conditions, all while ensuring centimeter-level precision.

However, this space is rapidly evolving. AI-assisted annotation tools, semi-automated pipelines, and collaborative platforms are beginning to bridge the gap between complexity and efficiency. Tools like those developed by Mindkosh and other AI annotation companies leverage automation, machine learning, and cloud collaboration to streamline large-scale LiDAR annotation.

To meet the growing demand for autonomous vehicles, smart cities, and advanced robotics, the ecosystem needs ongoing investment—into better tools, smarter workflows, and skilled human capital. LiDAR annotation may be one of the most challenging aspects of AI development today, but with innovation and persistence, it’s a challenge we’re well on the way to overcoming.