Human-in-the-Loop Definition

Human-in-the-Loop (HITL) is a machine learning approach where humans continuously review, correct, and guide AI outputs to improve model accuracy and performance over time.

It creates a feedback loop where AI systems learn from human input and become more reliable with each iteration.

In 2026, HITL is widely used in the following areas:

- Computer vision

- Large language models (LLMs)

- AI evaluation and quality assurance workflows

In simple terms, HITL is a system where human judgment helps AI learn faster, reduce errors, and handle real-world complexity more effectively.

What is Human-in-the-Loop (HITL)?

"Human-in-the-Loop" (HITL) refers to a collaborative approach in artificial intelligence where humans play an active and ongoing role in the training, evaluation, and improvement of machine learning models. Rather than treating AI as a fully autonomous system, HITL acknowledges a fundamental reality: machines are powerful, but they are not perfect. They lack context, intuition, and the ability to interpret ambiguity in the way humans can. This is where human involvement becomes critical. In a HITL system, humans label and annotate data, review model predictions, correct errors and provide feedback that guides learning. Over time, this interaction fosters a system that not only automates tasks but also continuously improves through collaboration.

A useful way to understand HITL is through a simple analogy. Imagine teaching a child to recognize objects. The child makes guesses, receives corrections, and gradually improves. AI models work in a similar way, except the “teacher” is a human annotator or reviewer. This iterative learning process is what makes HITL especially valuable in domains where data is complex or ambiguous, mistakes carry high consequences, and context matters more than raw computation.

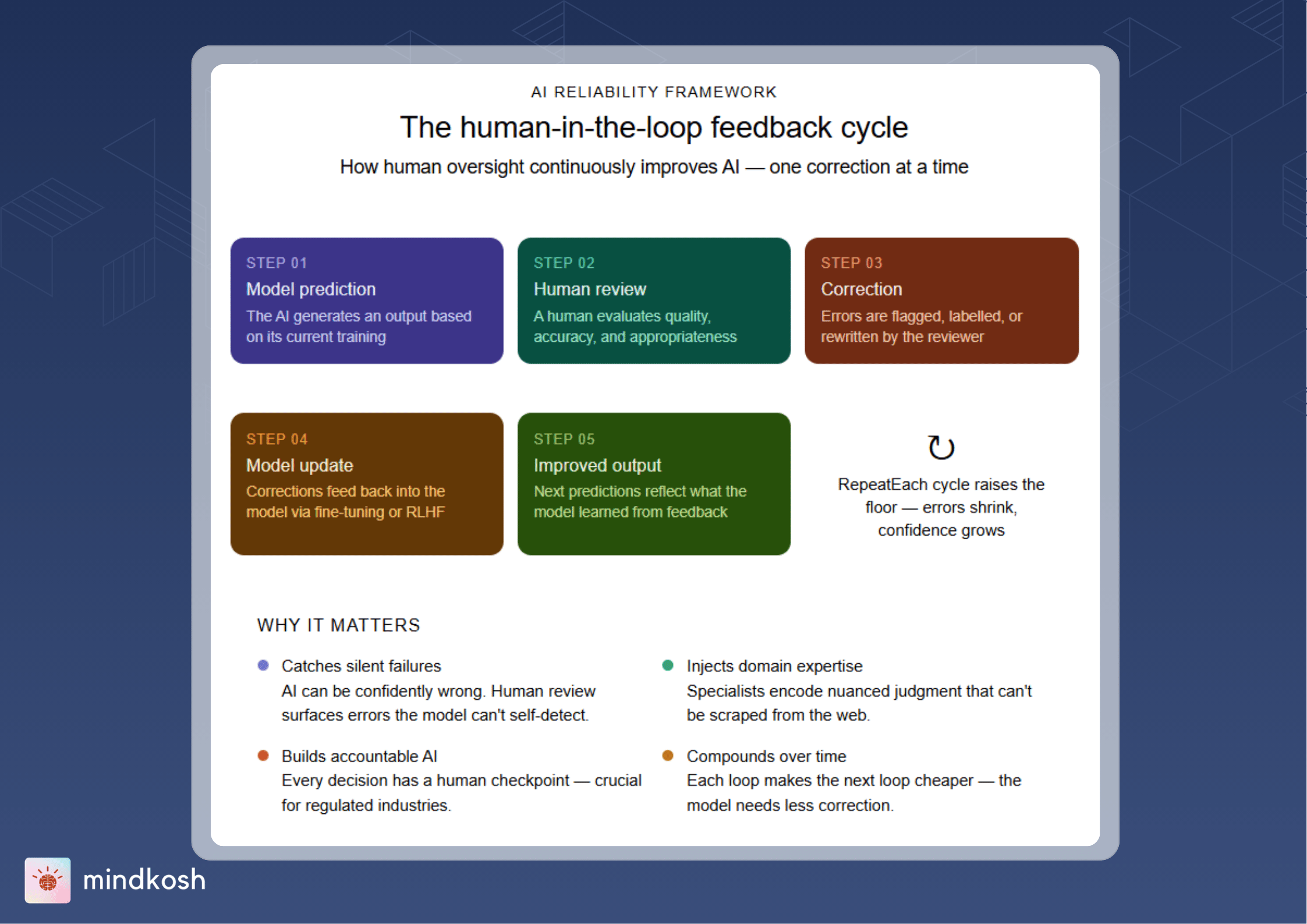

How Human-in-the-Loop Works (Step-by-Step)

At its core, human-in-the-loop is built around a simple but powerful cycle.

The process typically unfolds like this. First, the model processes input data and generates predictions. These predictions could take many forms: classifying images, generating text, or identifying patterns in data. Then a human reviews the output. This step is crucial because it introduces judgment, context, and domain expertise into the system. If the model makes mistakes, the human corrects them.

These corrections are not just fixes; they are learning signals. The corrected data is fed back into the system, allowing the model to update its understanding. Over time, this leads to improved accuracy and performance. Finally, the model is redeployed, now better equipped to handle similar tasks in the future.

To summarize this process:

- The model predicts

- Humans review

- Errors are corrected

- Feedback is incorporated

- The model improves

This loop repeats continuously, creating a system that evolves with every interaction.
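The cycle above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `MemoryModel`, `hitl_round`, and the `TRUTH` lookup that stands in for a human reviewer are all hypothetical names, and a real system would retrain or fine-tune an actual model instead of memorizing corrections.

```python
from collections import Counter


class MemoryModel:
    """Toy stand-in for a real model (an assumption for illustration).

    It memorizes corrected labels per input and otherwise falls back to the
    most common label it has been corrected to so far.
    """

    def __init__(self):
        self.memory = {}
        self.label_counts = Counter()

    def predict(self, item):
        if item in self.memory:
            return self.memory[item]
        if self.label_counts:
            return self.label_counts.most_common(1)[0][0]
        return "unknown"

    def update(self, corrections):
        # Incorporate human feedback: store each corrected label.
        for item, label in corrections:
            self.memory[item] = label
            self.label_counts[label] += 1


def hitl_round(model, items, human_review):
    """Run one predict -> review -> correct -> update cycle.

    Returns how many predictions the reviewer had to correct.
    """
    corrections = []
    for item in items:
        predicted = model.predict(item)
        reviewed = human_review(item, predicted)  # human accepts or overrides
        if reviewed != predicted:
            corrections.append((item, reviewed))
    model.update(corrections)
    return len(corrections)


# Hypothetical ground truth standing in for a human reviewer's judgment.
TRUTH = {"img_1": "car", "img_2": "truck", "img_3": "car"}


def reviewer(item, predicted):
    return TRUTH[item]


model = MemoryModel()
errors_per_round = [hitl_round(model, list(TRUTH), reviewer) for _ in range(3)]
print(errors_per_round)  # [3, 0, 0]
```

The number of corrections drops after the first round because the feedback was incorporated before the next pass, which is the behavior the loop is meant to produce.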

The HITL Feedback Loop (Why It Matters)

One of the defining features of human-in-the-loop systems is the feedback loop. HITL systems are dynamic, unlike static models that undergo a one-time training and deployment process. They learn continuously from human input, which allows them to adapt to new data, edge cases, and changing requirements.

This loop is powerful because it enables continuous improvement rather than one-time training, faster correction of errors, better handling of rare or ambiguous cases, and alignment with real-world expectations.

The more structured and consistent this loop is, the more effective the system becomes. In practice, this means that HITL is not just about adding humans into the process—it is about designing a system where human feedback drives meaningful improvement.

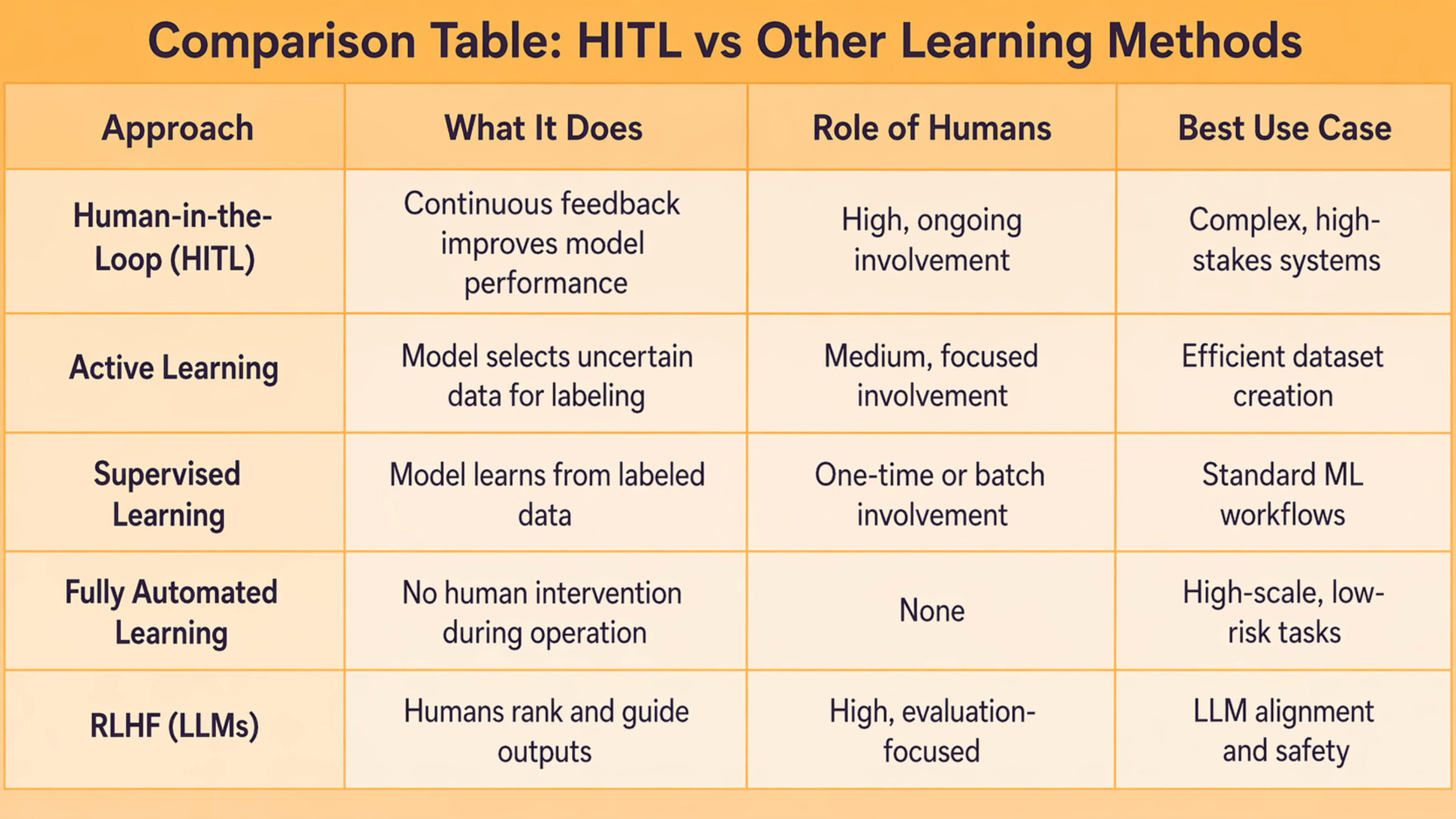

Human-in-the-Loop vs Other Learning Approaches

Human-in-the-loop is often discussed alongside other machine learning approaches, which can create confusion. Understanding how these methods differ—and how they relate—helps clarify where HITL fits.

The key distinction is that HITL is not just a technique—it is a broader framework.

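Supervised learning trains a model on labeled examples, active learning selects the most informative examples for humans to label, and RLHF (reinforcement learning from human feedback) uses human preference judgments to shape model behavior. All three can exist within an HITL system. What defines HITL is the continuous presence of human feedback throughout the model's lifecycle.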
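To make the relationship concrete, here is a minimal sketch of one common active learning strategy, least-confidence sampling, used to decide which items go to human reviewers. The function name `select_for_review`, the `budget` parameter, and the probability values are assumptions made up for this example.

```python
def select_for_review(probabilities, budget):
    """Pick the `budget` items the model is least sure about.

    `probabilities` maps an item id to the model's class probabilities.
    Items are ranked by their highest class probability, lowest first.
    """
    ranked = sorted(probabilities, key=lambda item_id: max(probabilities[item_id]))
    return ranked[:budget]


# Hypothetical model outputs for four items (two classes each).
probs = {
    "a": [0.98, 0.02],
    "b": [0.55, 0.45],
    "c": [0.70, 0.30],
    "d": [0.51, 0.49],
}

review_queue = select_for_review(probs, budget=2)
print(review_queue)  # ['d', 'b']
```

Here the selection step is the active learning part, while the human review that follows it is what makes the overall system human-in-the-loop.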

Where Human-in-the-Loop is Used Today (2026)

Human-in-the-loop has become a foundational approach across multiple AI domains. Its applications have expanded significantly, especially with the rise of generative AI.

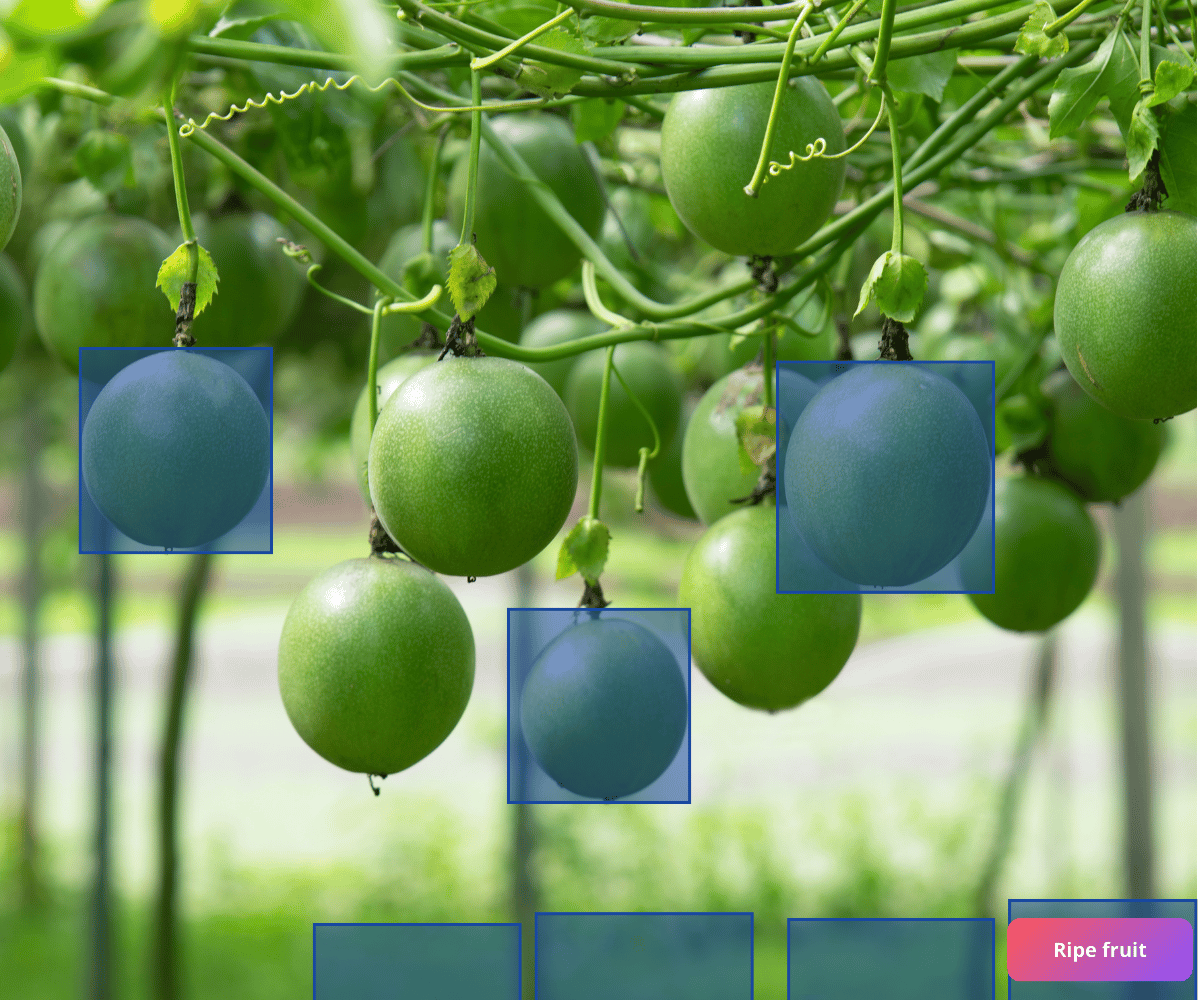

Computer Vision

In computer vision, HITL plays a central role in training and refining models.

Common applications include:

- Image and video annotation

- Object detection and segmentation

- Medical imaging analysis

- Autonomous systems validation

Humans help ensure that visual data is labeled accurately, which directly impacts model performance.

LLMs & Generative AI (The Biggest Shift in 2026)

One of the most important developments in recent years is the role of HITL in large language models.

Unlike traditional ML systems, LLMs generate open-ended outputs. This makes human evaluation essential.

HITL in LLMs includes:

- Reviewing generated responses

- Ranking outputs based on quality

- Providing feedback on helpfulness and accuracy

- Improving prompt effectiveness

- Identifying hallucinations or incorrect responses

This process is often implemented through reinforcement learning from human feedback (RLHF), where human rankings and ratings directly shape model behavior.

As AI systems become more conversational and generative, HITL becomes less optional and more foundational.
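As a rough illustration of how ranked outputs can become training signal, the sketch below turns one reviewer's best-first ranking into pairwise preference records. The field names `prompt`, `chosen`, and `rejected` are an assumed convention for this example, not a format required by any particular tool.

```python
from itertools import combinations


def ranking_to_pairs(prompt, ranked_responses):
    """Convert a best-first human ranking into (chosen, rejected) pairs.

    `combinations` keeps the input order, so the first element of each
    pair is always ranked higher than the second.
    """
    return [
        {"prompt": prompt, "chosen": better, "rejected": worse}
        for better, worse in combinations(ranked_responses, 2)
    ]


# Hypothetical responses, already ordered best-first by a reviewer.
pairs = ranking_to_pairs(
    "Explain HITL in one sentence.",
    ["response_A", "response_B", "response_C"],
)
for pair in pairs:
    print(pair["chosen"], ">", pair["rejected"])
```

A ranking of three responses yields three pairs, and records like these are a typical input for training a preference or reward model.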

AI Quality Assurance & Operations

Beyond training, HITL is also critical in production environments.

Teams use it to:

- Validate predictions before deployment

- Monitor model performance

- Detect and fix edge cases

- Maintain consistency across large datasets

In these scenarios, HITL acts as a safety layer, ensuring that AI systems perform reliably in real-world conditions.
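One simple way to build such a safety layer is to route low-confidence predictions to a reviewer and let the rest pass through. The sketch below assumes the model exposes a confidence score between 0 and 1. The `route_prediction` name and the 0.9 threshold are illustrative choices, not recommendations.

```python
def route_prediction(confidence, threshold=0.9):
    """Decide whether a prediction can pass automatically or needs a human."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence >= threshold:
        return "auto_accept"
    return "human_review"


# Hypothetical (label, confidence) pairs coming from a deployed model.
predictions = [("car", 0.97), ("truck", 0.62), ("car", 0.90)]

decisions = [(label, route_prediction(conf)) for label, conf in predictions]
print(decisions)
# [('car', 'auto_accept'), ('truck', 'human_review'), ('car', 'auto_accept')]
```

In practice the threshold would be tuned against measured error rates, since a model's confidence score is not always well calibrated.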

Benefits of Human-in-the-Loop AI

The value of HITL comes from its ability to combine the strengths of humans and machines.

Some of the key benefits include:

- Improved model accuracy: continuous human corrections help reduce errors over time

- Better handling of edge cases: humans can interpret rare or ambiguous scenarios

- Reduced bias: human oversight helps identify and mitigate bias in datasets and outputs

- Increased trust and reliability: systems become more dependable when humans are involved

- Faster learning cycles: feedback accelerates model improvement

These advantages make HITL especially important in applications where precision and trust are critical.

Limitations of HITL

Despite its benefits, HITL also introduces trade-offs that need to be managed.

Some of the key challenges include:

- Slower workflows: human involvement can introduce delays

- Higher costs: skilled annotators and reviewers require investment

- Human error: mistakes in labeling or feedback can affect model performance

- Scalability constraints: managing large-scale human workflows can be complex

Understanding these limitations is important for designing effective HITL systems that balance accuracy with efficiency.

When Should You Use HITL?

Not every AI system requires human-in-the-loop workflows. The decision depends on the nature of the task and the level of accuracy required.

Use HITL when:

- Accuracy is critical

- Data is complex or ambiguous

- The model is in early stages of development

- Human judgment is necessary

Avoid heavy HITL when:

- Tasks are repetitive and predictable

- Speed and scale are the primary priorities

- The system has already reached high reliability

The goal is not to maximize human involvement, but to use it strategically where it adds the most value.

Example: A Simple HITL Workflow

To understand how HITL works in practice, consider a basic example.

An AI model is trained to identify cars in images. Initially, the model makes several mistakes—it mislabels objects or misses certain cases.

Human reviewers step in and correct these errors. They adjust labels, identify edge cases, and provide feedback.

This corrected data is then used to retrain the model.

Over time:

- The number of errors decreases

- The model becomes more confident

- Human intervention is needed less frequently

This cycle continues until the model reaches an acceptable level of performance.

How to Implement HITL (Without Overcomplicating It)

Implementing HITL does not require a complex setup. The key is to focus on structure and clarity. Start by defining clear labeling and review guidelines. This ensures consistency across human reviewers. Next, use AI-assisted pre-labeling to reduce manual effort. Let the model do the first pass, and use humans for validation and correction.

Introduce structured review layers. This could include quality checks, second-level reviews, or approval workflows. Finally, track improvements over time. Measure how human feedback impacts model performance and adjust your process accordingly. The goal is to create a system where humans and AI work together efficiently, rather than slowing each other down.
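For the tracking step, one lightweight metric is the correction rate: the share of model pre-labels that reviewers had to change in each batch. The sketch below is a minimal example. The `correction_rate` helper, the batch names, and the label pairs are all made up for illustration.

```python
def correction_rate(batch):
    """Fraction of pre-labels a human changed.

    `batch` is a list of (model_label, human_label) pairs.
    Returns 0.0 for an empty batch.
    """
    if not batch:
        return 0.0
    corrected = sum(1 for model_label, human_label in batch if model_label != human_label)
    return corrected / len(batch)


# Hypothetical review logs for two consecutive batches.
batches = {
    "batch_1": [("car", "car"), ("car", "truck"), ("bus", "truck"), ("car", "car")],
    "batch_2": [("car", "car"), ("truck", "truck"), ("bus", "truck"), ("car", "car")],
}

rates = {name: correction_rate(pairs) for name, pairs in batches.items()}
print(rates)  # {'batch_1': 0.5, 'batch_2': 0.25}
```

A falling correction rate across batches suggests the feedback is being absorbed. A flat or rising rate is a cue to revisit the guidelines or the retraining process.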

Human-in-the-Loop in Practice

While the concept of human-in-the-loop sounds straightforward, implementing it effectively at scale is where most teams struggle. The real challenge is not just adding human review but ensuring that feedback is consistent, efficient, and actually improves model performance.

In real-world machine learning workflows, poor annotation quality often becomes a major bottleneck. It leads to incorrect model learning, mislabeled edge cases, slower training cycles, and compounding errors over time, especially in high-stakes industries where accuracy is critical. This is why modern HITL systems are shifting toward a QA-first approach, where quality checks are embedded directly into the workflow rather than applied after the fact. This allows teams to detect issues early and focus effort where it matters most.

Platforms like Mindkosh operationalize this by introducing structured validation mechanisms: multi-annotator workflows that compare outputs and surface disagreements, honeypot tasks that measure annotator accuracy in real time, and reviewer interfaces that prioritize edge cases instead of requiring full dataset reviews. Together, these help ensure that only high-confidence data moves forward.

Alongside this, additional layers such as issue tracking for ambiguous data, annotator performance monitoring, and AI-assisted labeling help strengthen the feedback loop while reducing manual effort, making the system both scalable and efficient. Ultimately, successful human-in-the-loop systems are not defined simply by the presence of humans, but by how well their input is structured, measured, and integrated into the learning process. That is what turns HITL from a manual intervention into a reliable engine for continuous improvement.
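Two of the validation mechanisms described above, multi-annotator comparison and honeypot tasks, can be sketched generically in Python. This does not reflect any specific platform's API. The function names, the `min_votes` parameter, and the sample data are assumptions for illustration only.

```python
from collections import Counter


def consensus(labels, min_votes=2):
    """Compare labels from several annotators for one item.

    Returns (top_label, agreed). `agreed` is False when fewer than
    `min_votes` annotators chose the top label, which flags the item
    as a disagreement to surface for review.
    """
    top_label, votes = Counter(labels).most_common(1)[0]
    return top_label, votes >= min_votes


def honeypot_accuracy(answers, gold):
    """Score an annotator on tasks whose correct label is already known.

    `answers` maps task id to the annotator's label, `gold` maps honeypot
    task ids to the known label. Returns None if no honeypots were answered.
    """
    scored = [task for task in answers if task in gold]
    if not scored:
        return None
    correct = sum(answers[task] == gold[task] for task in scored)
    return correct / len(scored)


# Hypothetical labels from three annotators on two items.
print(consensus(["car", "car", "truck"]))     # ('car', True)
print(consensus(["car", "truck", "bus"])[1])  # False, so escalate to a reviewer

# Hypothetical annotator answers, with t1 and t3 seeded as honeypots.
answers = {"t1": "car", "t2": "truck", "t3": "car"}
gold = {"t1": "car", "t3": "truck"}
print(honeypot_accuracy(answers, gold))  # 0.5
```

Items without consensus are natural candidates for the reviewer queue, and a low honeypot score can trigger retraining of the annotator or a second look at their recent work.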

Conclusion

Human-in-the-loop AI represents a shift in how we think about intelligent systems. Rather than aiming for full automation, the most effective AI systems today are built on collaboration—combining machine efficiency with human judgment.

As AI continues to expand into areas like generative models and real-world decision-making, the importance of HITL will only grow.

The future of AI is not just automated. It is interactive, adaptive, and human-guided. In that collaboration lies the key to building systems that are not only powerful but also reliable and trustworthy.