The data bottleneck in AV development

For teams building autonomous systems for self-driving vehicles, robotics, or industrial automation, high-quality labeled data isn’t just a nice-to-have; it’s the foundation for perception, planning, and control systems. As your dataset scales to millions of LiDAR frames, camera images, radar sequences, and GPS metadata, the question inevitably arises:

Should we build our own in-house annotation tool or use a purpose-built platform like Mindkosh?

Building your own tool might seem like the flexible, cost-efficient route, but the true picture is more nuanced and often more expensive. Five or six years ago, building in-house might have been the only real option; today annotation tools have matured, and you no longer have to develop your own. In this post, we’ll unpack the cost, flexibility, and quality trade-offs involved in the build-vs-buy decision, especially for AV teams working with complex, multi-sensor datasets.

The three key dimensions: Cost, Flexibility, and Quality

Let’s break down what truly matters when choosing between building or buying.

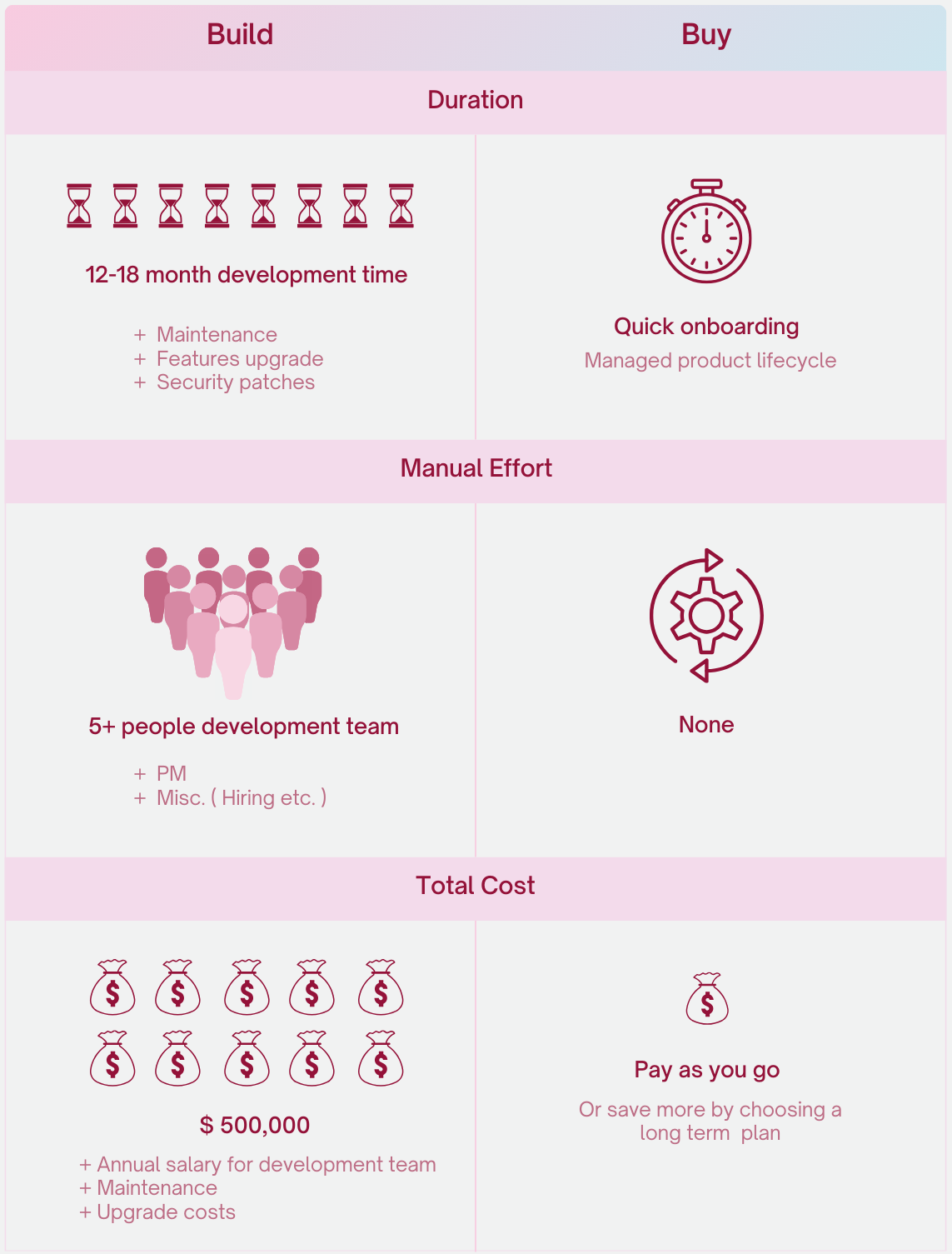

Cost: The hidden price you pay for "free"

What building looks like:

- Hiring frontend and backend devs to build 3D/2D annotation tools.

- Acquiring and maintaining infrastructure for data management, versioning, and storage.

- Dealing with maintenance overhead, bug fixing, DevOps, and user support.

- Building annotation tools for images as well as other sensors like LiDAR, which requires working with advanced 2D/3D graphics.

- Identifying the required workflows for data as well as annotation, and building a flexible annotation flow.

However, as with any long-term development project, this is just the tip of the iceberg. There are many hidden costs, including:

- Months or years of developer time (often more than 6 months for a basic MVP).

- Opportunity cost — every week spent on internal tooling is a week not spent on your core product.

- Technical debt when use cases evolve faster than the tool can. This happens much more often than you would think, and it is one of the most common reasons teams abandon their internal annotation tool efforts. Since most developers working on the project don't have experience with annotation pipelines, they will likely make decisions that either don't scale or don't anticipate how the annotation requirements might change in the future.

On the other hand, if you were to go with an existing platform, like Mindkosh, you get:

- Out-of-the-box support for 3D point clouds, images, videos, and radar in a variety of formats.

- Support for single-channel images like depth maps and thermal maps, with the ability to choose colormaps and overlay them on RGB images.

- Built-in QA workflows, detailed analytics, issue tracking, and more.

- Automation: keyframe interpolation, auto-labeling, and interactive segmentation.

- Cost-effective scaling: add as much data and as many users as you want, and pay only for what you need.
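
To give a sense of what sits behind even the simplest automation feature in the list above, here is a minimal sketch of keyframe interpolation for 2D boxes. It assumes plain linear interpolation between manually labeled keyframes; the `Box2D` type and both function names are hypothetical and are not taken from Mindkosh or any other tool.

```python
from dataclasses import dataclass


@dataclass
class Box2D:
    """Axis-aligned box in pixels: center (cx, cy), width w, height h."""
    cx: float
    cy: float
    w: float
    h: float


def interpolate_box(start: Box2D, end: Box2D, t: float) -> Box2D:
    """Linearly blend two keyframe boxes; t=0 gives start, t=1 gives end."""
    def lerp(a: float, b: float) -> float:
        return a + (b - a) * t

    return Box2D(
        lerp(start.cx, end.cx),
        lerp(start.cy, end.cy),
        lerp(start.w, end.w),
        lerp(start.h, end.h),
    )


def fill_between_keyframes(keyframes: dict) -> dict:
    """Return a box for every frame from the first to the last keyframe.

    keyframes maps a frame index to the Box2D an annotator drew by hand.
    """
    frames = sorted(keyframes)
    if not frames:
        return {}
    result = {}
    # Walk over consecutive keyframe pairs and fill the frames in between.
    for left, right in zip(frames, frames[1:]):
        for frame in range(left, right):
            t = (frame - left) / (right - left)
            result[frame] = interpolate_box(keyframes[left], keyframes[right], t)
    # The last keyframe is not covered by the loop above.
    result[frames[-1]] = keyframes[frames[-1]]
    return result
```

A real tool would also have to handle rotation, 3D cuboids, occlusion, and objects entering or leaving the scene, which is where most of the engineering effort goes.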

So, building looks cheaper, until it delays your roadmap and eats into R&D resources.

Flexibility: Control vs Capability

Building an in-house tool does give you full control: you get to design annotation flows and a UI that are specific to your own requirements. But it comes with a huge trade-off: you have to build every feature from scratch. You have the freedom to build anything you want, but you have to build at least the following:

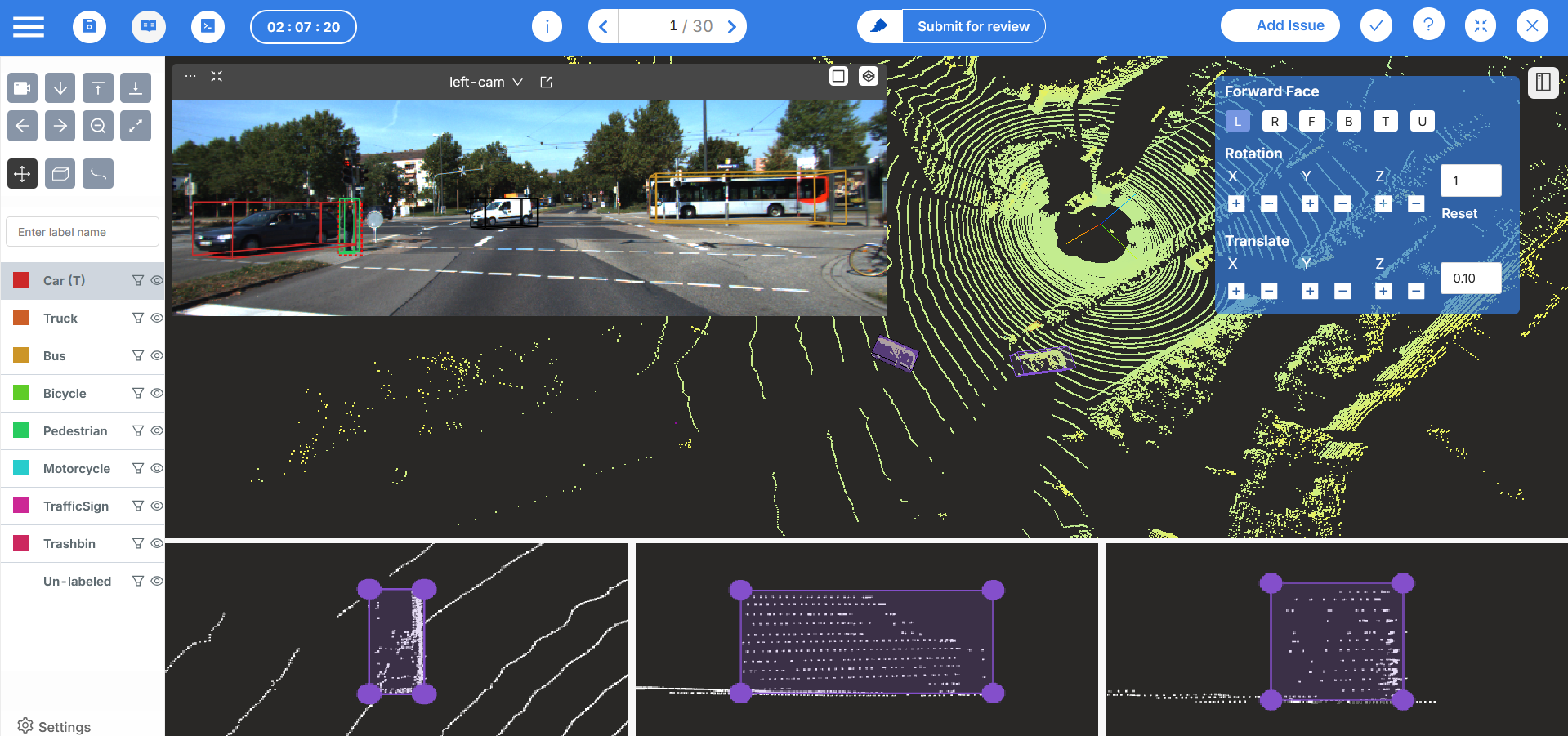

Custom UIs for LiDAR and camera fusion

At the very least, you will need to design UIs that can handle different image types, different annotation types, and 3D point clouds, which can be especially challenging. Having reference images alongside 3D point cloud data, as in sensor fusion use cases, adds even more complexity. And if your data also includes videos, that adds yet another layer, since video annotation has to track objects across frames.

Reviewer tools and performance metrics

If you deal with large volumes of data and a relatively large labeling team (anything larger than 10-15 people), you will almost certainly need to build tools to manage the team, analyze their performance to identify bottlenecks, and allow efficient quality checking. More on this last one in the Quality section below.

Data management

You will also need to design systems that can ingest large amounts of data, whether through the UI or through an API. These systems must also let users search through the data, create smaller subsets, and export annotations when labeling is complete. If you also want to label LiDAR data and the reference images together, this becomes more difficult, since you have to find ways to connect the point clouds with their corresponding camera images.
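
One common way to connect point clouds with camera images is to pair them by capture time. The sketch below assumes each LiDAR frame and each image carries a timestamp in seconds and that the nearest image within a small tolerance is the right match; the function name and the `max_gap` default are hypothetical, and real pipelines often rely on hardware sync signals or per-sensor calibration instead.

```python
from bisect import bisect_left


def match_nearest(lidar_ts, camera_ts, max_gap=0.05):
    """Map each LiDAR timestamp to the index of the closest camera timestamp.

    camera_ts must be sorted in ascending order. A LiDAR frame with no image
    within max_gap seconds maps to None so it can be flagged for review.
    """
    matches = {}
    for ts in lidar_ts:
        # Only the neighbours on either side of the insertion point can be closest.
        pos = bisect_left(camera_ts, ts)
        candidates = [i for i in (pos - 1, pos) if 0 <= i < len(camera_ts)]
        best = min(candidates, key=lambda i: abs(camera_ts[i] - ts), default=None)
        if best is not None and abs(camera_ts[best] - ts) > max_gap:
            best = None
        matches[ts] = best
    return matches
```

Returning `None` instead of the closest available image is a deliberate choice in this sketch: a silently mismatched pair is harder to catch later than a missing one.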

Annotation workflows

You will also need to decide what your annotation workflow looks like. How many levels of checking does your data need to go through? Does the final quality check happen on all data, or on a subset? What happens if some data does not pass the quality check? And do you want to bake these flows into the tool, or do you want the flexibility to have different flows for different tasks?
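
One way to keep these decisions out of the tool's code is to treat the workflow as configuration. The sketch below is an illustration only: the `Workflow` class, its stage names, and the rule that rejected items return to the first stage are all assumptions, and they represent one possible answer to the questions above rather than a recommended design.

```python
import random
from dataclasses import dataclass


@dataclass
class Workflow:
    stages: list                  # ordered stage names, last one is terminal
    qc_stage: str = "qc"          # name of the stage that may be sampled
    qc_sample_rate: float = 1.0   # fraction of items routed through qc_stage

    def next_stage(self, current, passed, rng=random):
        """Return the stage an item moves to after a review decision."""
        if not passed:
            # Assumption: rejected work goes back to the first stage.
            return self.stages[0]
        idx = self.stages.index(current) + 1
        last = len(self.stages) - 1
        # Skip the QC stage for items that fall outside the sample.
        if (idx < last and self.stages[idx] == self.qc_stage
                and rng.random() >= self.qc_sample_rate):
            idx += 1
        return self.stages[min(idx, last)]


# Two tasks with different flows, defined purely as data.
full_review = Workflow(["labeling", "validation", "qc", "done"])
spot_check = Workflow(["labeling", "validation", "qc", "done"], qc_sample_rate=0.1)
```

With this shape, adding a second validation pass or changing the QC sample rate for one task is a configuration change rather than a code change.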

This list is not exhaustive, and there will likely be many other things you will have to build. So while you can potentially build a tool tailored to your unique requirements, unless you’re planning to run a second company just for tooling, this level of customization is hard to sustain.

On the other hand, if you were to go with an existing platform, like Mindkosh, you can:

- Import your data using the UI or the Python SDK, or connect your existing cloud storage providers to the platform.

- Export annotations in a variety of formats and maintain versions.

- Customize annotation workflows, define complex label ontologies, and add reviewer logic.

- Integrate your own ML models or data pipelines into the annotation process using the Python SDK.

- Manage multiple labeling teams and restrict access with user roles.

- Get engineering support for tailored integrations.

So, buying doesn’t necessarily mean giving up flexibility — especially when the annotation platform is built to adapt.

Quality: The make-or-break factor

The best perception models are only as good as their training data. Ultimately, the one thing you want out of your annotation projects is high-quality labeled data. And the hardest thing to maintain at scale? Annotation quality. You may be tempted by the fact that you can build bespoke quality-check systems into your in-house tool, but this is not straightforward and requires experience with how labeling teams actually go about labeling data.

At the very least, you will need to:

- Allow your labeling team to have a desired annotation workflow.

- Put your data in different stages of annotation - Labeling, Validation, QC etc.

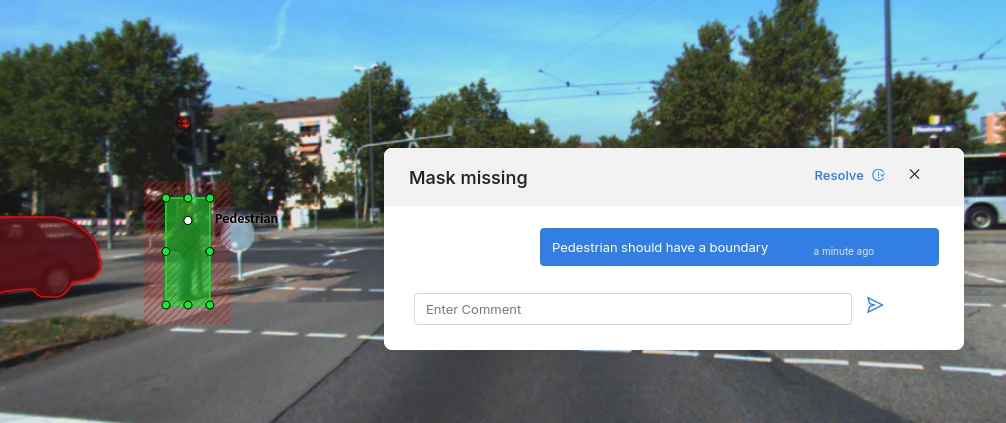

- Allow communication between the labeling team and other stakeholders. This is potentially the most important aspect of ensuring high-quality annotations. Labeling teams will often have questions about how to label certain data points. This is especially true at the beginning of a labeling project, but it will generally be the case throughout the lifetime of the project as they encounter edge cases: scenarios that your team might not have anticipated but that happen occasionally in the real world. A system that lets all stakeholders communicate with the right context makes this easier, and it also gives quality checkers an efficient way to pass feedback to the labeling team.

- Build labeling tools such that common mistakes are avoided. This is a subtle but very important aspect that only gets highlighted once labeling teams have already labeled a lot of data. For example, we observed that when labeling point cloud sequences, some frames can have very few points visible from an object, while another frame might have the entire object visible. If you label the object independently in each frame, the labeling team has to guess its dimensions in frames where it is not properly visible. However, the dimensions of an object don't usually change over time: a car is still the same size as it moves along the road. So we added the ability to 'Lock' the dimensions of an object. You can set the dimensions of an object in the frame where it's best visible, and they will be copied to all other frames automatically. This ensures that your annotations have consistent dimensions.
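
The dimension-locking idea in the last point can be sketched in a few lines. This is an illustration under stated assumptions, not Mindkosh's implementation: the `Cuboid` type and `lock_dimensions` function are hypothetical, and falling back to the frame with the most visible points is our own assumption for cases where the annotator has not chosen a reference frame.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Cuboid:
    frame: int
    center: tuple             # (x, y, z) in metres
    size: tuple               # (length, width, height) in metres
    yaw: float                # heading in radians
    visible_points: int = 0   # LiDAR points that fall inside the cuboid


def lock_dimensions(track, reference_frame=None):
    """Copy one frame's size to every cuboid of the same object.

    track holds the cuboids of a single object across frames. If
    reference_frame is None, the frame with the most visible points is used.
    Position and heading are left untouched.
    """
    if not track:
        return []
    if reference_frame is None:
        ref = max(track, key=lambda c: c.visible_points)
    else:
        ref = next(c for c in track if c.frame == reference_frame)
    return [replace(c, size=ref.size) for c in track]
```

Only `size` is overwritten, so each frame keeps the position and heading the annotator set for it.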

On the other hand, with an existing platform, like Mindkosh, you get:

- Customizable workflows with Annotation, Validation, QC, and Completed modes, and the ability to assign different people to different stages and batches.

- Multi-annotator validation and scoring.

- Honeypot setup, so you can quality-check a few samples and automatically calculate quality metrics based on the changes you suggest.

- Issue tracking and comment threads, with the ability to attach issues to a region, an annotation, or the entire frame to provide important context.
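
To make the honeypot idea concrete, here is a minimal sketch of how a quality score could be derived from a reviewer's corrections. The scoring rule is an assumption made for illustration (same label and box overlap above a threshold counts as correct); it is not how Mindkosh computes its metrics, and `iou`, `honeypot_score`, and the 0.9 threshold are hypothetical.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def honeypot_score(submitted, reviewed, iou_threshold=0.9):
    """Share of objects that needed no correction from the reviewer.

    submitted and reviewed map an object id to (label, box). An object counts
    as correct when the reviewer kept its label and the corrected box overlaps
    the submitted one with IoU >= iou_threshold. Objects the reviewer added or
    deleted count against the score.
    """
    ids = set(submitted) | set(reviewed)
    if not ids:
        return 1.0
    correct = 0
    for obj_id in ids:
        if obj_id in submitted and obj_id in reviewed:
            s_label, s_box = submitted[obj_id]
            r_label, r_box = reviewed[obj_id]
            if s_label == r_label and iou(s_box, r_box) >= iou_threshold:
                correct += 1
    return correct / len(ids)
```

Tracking this score per annotator over time is one way to spot who needs clearer guidelines before errors spread through the dataset.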

With an in-house tool, it can take a while to get the workflows and quality-check tools in place, as doing so requires experience. This is lost time that you could otherwise use to build your core products. And while these tools are being added, you risk stockpiling error-ridden datasets.

When does building your own annotation tool make sense?

So does building your own tool never make sense? Not quite. While in most cases it’s better to tap into existing tooling, sometimes you just have to build. You might consider building your own annotation tool if:

- You have a large in-house engineering team (8+ devs).

- You have experience labeling lots of data and are aware of workflows, data requirements, common issues encountered during labeling, etc.

- Your annotation needs are highly unique and not covered by any platform.

- You’re prepared for 8–18 months of development before production use.

- You’re comfortable owning long-term maintenance, updates, and QA evolution.

Everyone else? You’re probably better off focusing on what matters — building your core product — and letting Mindkosh handle the labeling.

Conclusion: Focus on models, not tooling

Building a labeling tool may feel like control. But in practice, it’s a time sink, a cost center, and a constant distraction from your core mission: making autonomous systems safe, smart, and production-ready.

With Mindkosh, you don’t sacrifice flexibility — you gain reliability, speed, and precision. Our platform is built for AV annotation at scale — and ready to support your edge cases, workflows, and evolving datasets from day one.

Curious about custom workflows or integrations? Get in touch with the Mindkosh team and see how we can support your AV journey.