The next wave of artificial intelligence won’t live on your screen. It will move through warehouses, navigate streets, assist workers, and make decisions in real-world environments, often without direct human control. This shift is what many are beginning to call Physical AI.

If you’ve tried to research the term, you’ve likely encountered two extremes. On one end are highly technical explanations from infrastructure providers that focus on tools and implementation. On the other are high-level discussions that explore societal implications without offering operational clarity. For businesses evaluating the opportunity, neither fully answers the core question: what is Physical AI, and how can it create value?

In this blog, we build a clear and practical definition of Physical AI from first principles: not as a technical specification, but as a strategic concept that can be acted on. We break Physical AI down into concrete capabilities that can be integrated into your business strategy. We then explore how it differs from traditional robotics, walk through the sectors where it is already delivering value, examine the data infrastructure that makes these systems work, and look at how organizations can prepare to adopt it. Finally, we outline how businesses can position themselves early in this emerging ecosystem to capture long-term advantage.

What is Physical AI? (A clear definition)

Physical AI is the convergence of artificial intelligence with systems that operate in the physical world — machines that can perceive their environment through sensors, reason about what they are perceiving, make decisions in real time, and take autonomous action, with minimal or no human intervention.

At its core, Physical AI is not just about intelligence. The AI that has dominated headlines over the past few years (large language models, image generators, recommendation engines) processes digital inputs and produces digital outputs. Physical AI, on the other hand, is about intelligence that can take action in real environments.

Traditional AI systems operate in digital environments. They process digital inputs (text, images, or numbers) and return outputs within software systems. Physical AI extends this loop into the real world:

- It captures real-world data through sensors

- It interprets that data using AI models

- It makes decisions in real time

- It executes actions physically

- It learns continuously from outcomes

This creates a closed loop: perception → decision → action → feedback.
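To make the loop concrete, here is a minimal Python sketch, assuming a hypothetical one-dimensional actuator (`ToyArm`) whose real response to a command is unknown to the controller. Each pass perceives the arm's position, decides on a command, acts, and uses the observed outcome to update its belief about how the actuator behaves. The class, the gain values, and the learning rate are illustrative assumptions, not a real robot API.

```python
from dataclasses import dataclass


@dataclass
class ToyArm:
    """Hypothetical 1-D actuator: each command moves it by true_gain * command."""
    position: float = 0.0
    true_gain: float = 0.8  # the real response, unknown to the controller

    def sense(self) -> float:
        return self.position

    def act(self, command: float) -> None:
        self.position += self.true_gain * command


def run_loop(arm: ToyArm, target: float, steps: int = 20, lr: float = 0.5) -> float:
    """Run perceive -> decide -> act -> learn cycles; return the learned gain estimate."""
    est_gain = 1.0  # initial (wrong) belief about how the actuator responds
    for _ in range(steps):
        before = arm.sense()                     # perception
        command = (target - before) / est_gain   # decision based on current belief
        if abs(command) < 1e-9:                  # close enough to the target: stop
            break
        arm.act(command)                         # action
        observed = arm.sense() - before          # feedback: what actually happened
        measured_gain = observed / command
        est_gain += lr * (measured_gain - est_gain)  # learn from the outcome
    return est_gain


arm = ToyArm()
learned = run_loop(arm, target=10.0)
print(f"learned gain {learned:.3f}, final position {arm.position:.6f}")
```

Even in this toy, the feedback step is what matters: the controller starts with a wrong belief (a gain of 1.0), and the outcome of each action pulls that belief toward the true value, so later commands land more precisely.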

Unlike static automation systems, Physical AI systems evolve through experience. They are not limited to predefined instructions but adapt to changing environments.

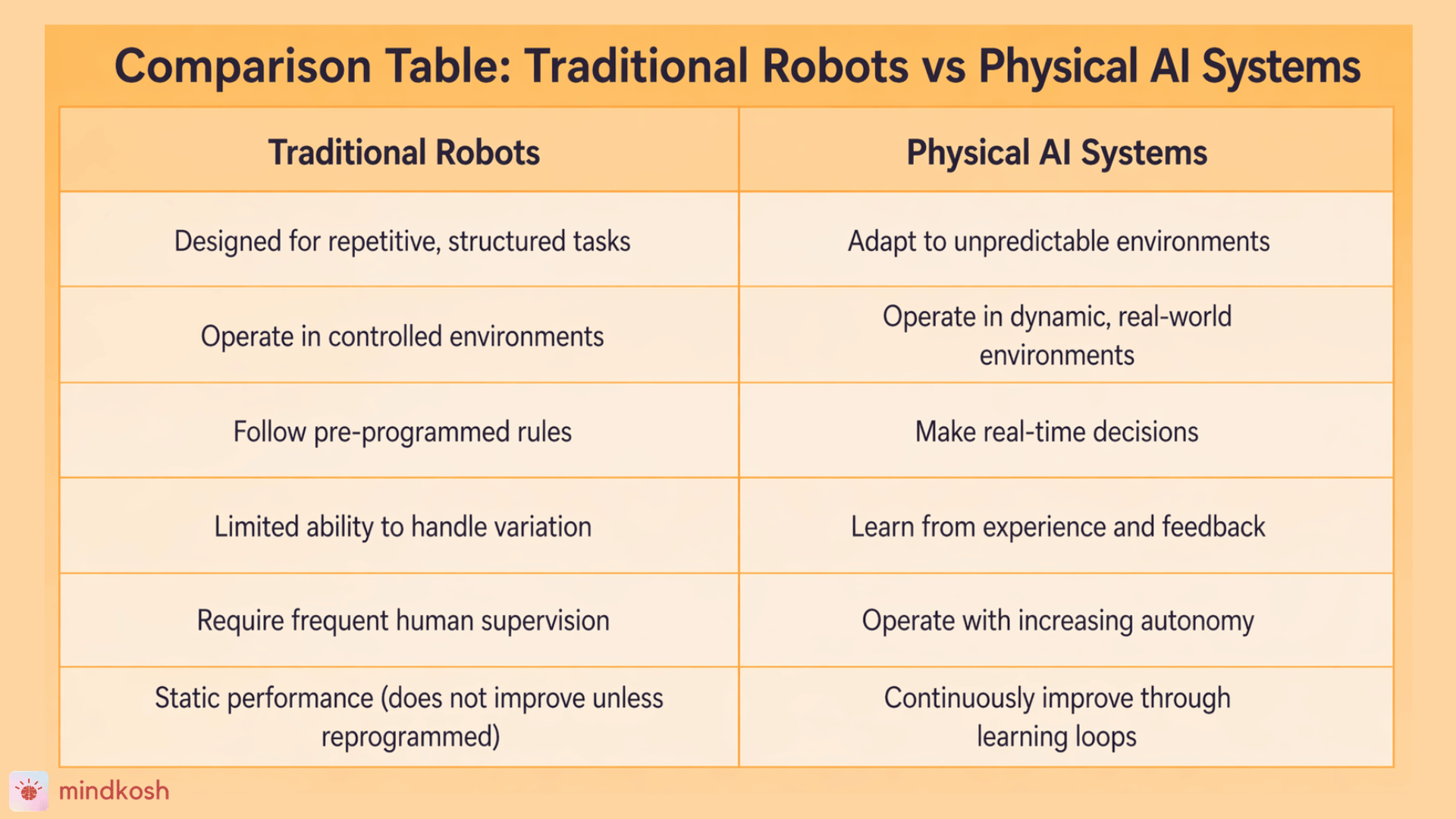

What makes it different from the robots we already know

This is where many business discussions go sideways. People hear "Physical AI" and immediately picture the industrial robots that have operated on factory floors for decades—the articulated arms welding car frames or placing components on assembly lines with extraordinary precision and speed.

Those robots are impressive, but they are not Physical AI. The distinction matters enormously.

Traditional industrial robots are programmed for specific, repetitive tasks in controlled environments. They execute instructions. If the environment changes — a component arrives at a slightly different angle, a new product needs to be handled, or an obstacle appears on the floor—they fail, stop, or require a human to intervene and reprogram them. Their value is in consistency within a known domain. Their limitation is an almost total lack of adaptability.

Physical AI systems are designed around the opposite principle: adaptability in unknown or changing environments. Rather than following instructions, they learn from data. Rather than being programmed for a specific task, they develop generalizable capabilities that can transfer across situations.

The difference is not the hardware—it is the intelligence layer.

A robotic arm on an assembly line becomes a Physical AI system when it can:

- Identify new objects without reprogramming

- Adjust grip dynamically

- Optimize performance based on past outcomes

This is why Physical AI is often described not as a replacement for robotics but as an evolution of it.

How Physical AI Works

Physical AI works by combining real-world perception, AI-driven decision-making, and continuous learning into a single loop that enables autonomous action.

Unlike traditional automation, which follows fixed rules, Physical AI systems operate in dynamic environments. For example, a warehouse robot that follows a set path is automation—but one that can reroute, avoid obstacles, and optimize delivery in real time is using Physical AI. The difference is the shift from rule-based systems to learning systems.
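The warehouse example can be sketched in a few lines of Python. This is a minimal illustration, assuming a hypothetical grid map where `1` marks a blocked cell: the rule-based robot can only check whether its fixed route is still clear, while the adaptive robot replans around the obstacle. The replanning uses plain breadth-first search as a stand-in; it shows the rerouting behavior only and does not learn anything on its own.

```python
from collections import deque
from typing import Dict, List, Optional, Tuple

Cell = Tuple[int, int]
Grid = List[List[int]]  # 0 = free floor, 1 = obstacle (hypothetical map encoding)


def fixed_route_ok(grid: Grid, route: List[Cell]) -> bool:
    """A rule-based robot can only check whether its predefined path is still clear."""
    return all(grid[r][c] == 0 for r, c in route)


def replan(grid: Grid, start: Cell, goal: Cell) -> Optional[List[Cell]]:
    """Find a new shortest path around obstacles with breadth-first search."""
    rows, cols = len(grid), len(grid[0])
    prev: Dict[Cell, Optional[Cell]] = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            path = []
            node: Optional[Cell] = cell
            while node is not None:  # walk back from goal to start
                path.append(node)
                node = prev[node]
            return path[::-1]
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0 and nxt not in prev:
                prev[nxt] = cell
                queue.append(nxt)
    return None  # goal unreachable


grid = [[0, 1, 0],   # an obstacle has appeared at (0, 1)
        [0, 0, 0],
        [0, 0, 0]]
planned_route = [(0, 0), (0, 1), (0, 2)]
print(fixed_route_ok(grid, planned_route))  # False: the fixed path is blocked
print(replan(grid, (0, 0), (0, 2)))         # [(0, 0), (1, 0), (1, 1), (1, 2), (0, 2)]
```

In a real Physical AI system, the map itself would come from learned perception rather than a hand-written grid; the planner only turns that understanding into a new route.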

These systems rely on real-world, multimodal sensor data.

Real-world sensor data refers to all forms of input that help a system understand its environment. This includes:

- Visual data (cameras)

- Depth data (LiDAR)

- Egocentric (first-person) video, typically paired with keypoint annotation

- Motion and velocity (radar)

- Spatial orientation (IMUs)

- Environmental signals (temperature, pressure, sound)
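Because these streams arrive at different rates, using them together usually starts with aligning them in time. Below is a minimal sketch, assuming each sensor produces sorted timestamps in seconds: each camera frame is paired with the nearest LiDAR sweep, and frames with no sweep within a tolerance are dropped. The roughly 30 Hz and 10 Hz rates and the 20 ms tolerance in the example are illustrative assumptions.

```python
from bisect import bisect_left
from typing import List, Tuple


def nearest_index(timestamps: List[float], t: float) -> int:
    """Index of the timestamp closest to t (timestamps must be sorted and non-empty)."""
    i = bisect_left(timestamps, t)
    candidates = [j for j in (i - 1, i) if 0 <= j < len(timestamps)]
    return min(candidates, key=lambda j: abs(timestamps[j] - t))


def align(camera_ts: List[float], lidar_ts: List[float],
          tolerance: float) -> List[Tuple[float, float]]:
    """Pair each camera frame with the nearest LiDAR sweep; drop frames with no close match."""
    if not lidar_ts:
        return []
    pairs = []
    for t in camera_ts:
        j = nearest_index(lidar_ts, t)
        if abs(lidar_ts[j] - t) <= tolerance:
            pairs.append((t, lidar_ts[j]))
    return pairs


camera = [0.0, 0.033, 0.066, 0.1]  # roughly 30 Hz frames (seconds)
lidar = [0.0, 0.1]                 # 10 Hz sweeps (seconds)
print(align(camera, lidar, tolerance=0.02))  # [(0.0, 0.0), (0.1, 0.1)]
```

Dropping unmatched frames, rather than pairing them with a distant sweep, keeps an annotator from labeling a camera image against LiDAR data that shows the scene at a different moment.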

At the core is a continuous learning loop: sensors capture real-world data, AI models interpret it, actions are executed, and outcomes are fed back into the system. This allows performance to improve over time in live environments.

This is why training data is critical. The quality of sensor data and its annotation directly impacts how well these systems perform. In Physical AI, better data doesn’t just support the system—it defines it.

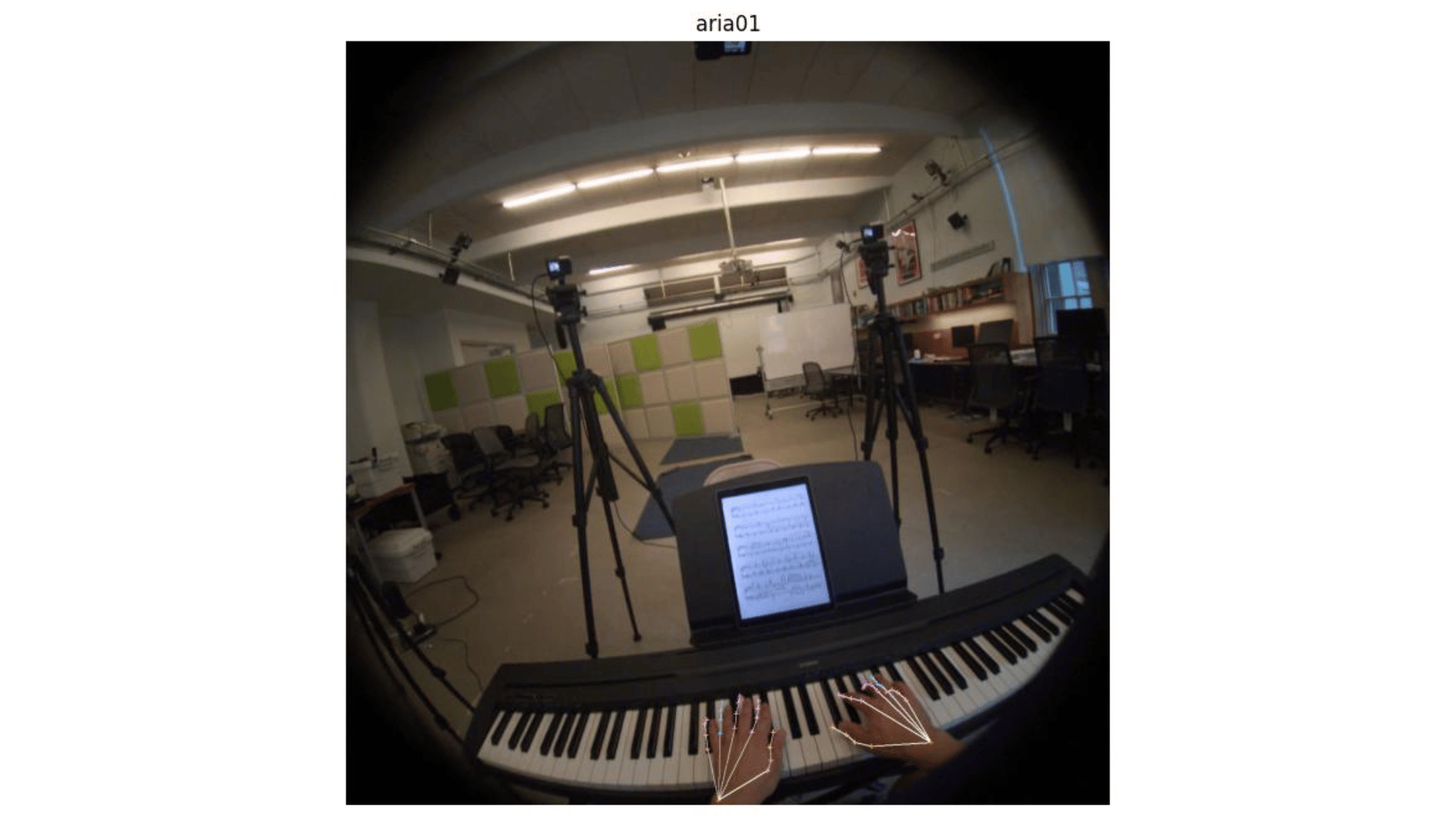

Egocentric Data: The Next Frontier in Physical AI

One of the fastest-growing data types in Physical AI is egocentric data—data captured from a first-person perspective, typically through wearable cameras or sensors mounted on robots.

This type of data is critical for training systems that need to understand human actions, intent, and fine-grained interactions with the physical world—from robotic assistants to industrial training systems.

Unlike traditional vision data, egocentric data requires keypoint annotation to be truly useful. This involves labeling fine-grained human movements—hand positions, joint coordinates, object interactions—frame by frame.

For Physical AI systems operating alongside humans, this level of detail is not optional. It is what enables:

- Accurate action recognition

- Human-robot collaboration

- Context-aware decision-making

This is also where annotation complexity increases significantly. Multi-frame tracking, occlusions, and real-world variability make egocentric data one of the most challenging data types to label at scale.
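To show what frame-by-frame keypoint labeling produces, here is a minimal sketch of one frame's hand annotation serialized in the COCO keypoint layout, a widely used convention that stores each point as `x, y, v`, where `v` is a visibility flag (0 = not labeled, 1 = labeled but occluded, 2 = labeled and visible). The keypoint names and coordinates are hypothetical, and this is not presented as the format used by Ego-Exo4D or Mindkosh.

```python
from dataclasses import dataclass
from typing import List

# COCO keypoint visibility flags
NOT_LABELED, OCCLUDED, VISIBLE = 0, 1, 2


@dataclass
class Keypoint:
    name: str   # hypothetical joint name, e.g. "wrist"
    x: float    # pixel column
    y: float    # pixel row
    v: int      # visibility flag


def to_coco_keypoints(points: List[Keypoint]) -> List[float]:
    """Flatten one frame's keypoints into COCO's [x1, y1, v1, x2, y2, v2, ...] list."""
    flat: List[float] = []
    for kp in points:
        if kp.v == NOT_LABELED:
            flat.extend([0, 0, 0])  # unlabeled points are stored as zeros
        else:
            flat.extend([kp.x, kp.y, kp.v])
    return flat


def occlusion_rate(points: List[Keypoint]) -> float:
    """Share of labeled keypoints that the annotator marked as occluded."""
    labeled = [kp for kp in points if kp.v != NOT_LABELED]
    if not labeled:
        return 0.0
    return sum(kp.v == OCCLUDED for kp in labeled) / len(labeled)


frame = [
    Keypoint("wrist", 412.0, 310.5, VISIBLE),
    Keypoint("thumb_tip", 455.0, 288.0, OCCLUDED),  # hidden behind a held object
    Keypoint("index_tip", 0.0, 0.0, NOT_LABELED),   # outside the field of view
]
print(to_coco_keypoints(frame))
print(occlusion_rate(frame))  # 0.5
```

Tracking the occlusion rate per frame or per clip is one simple way to see where the labeling difficulty described above concentrates.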

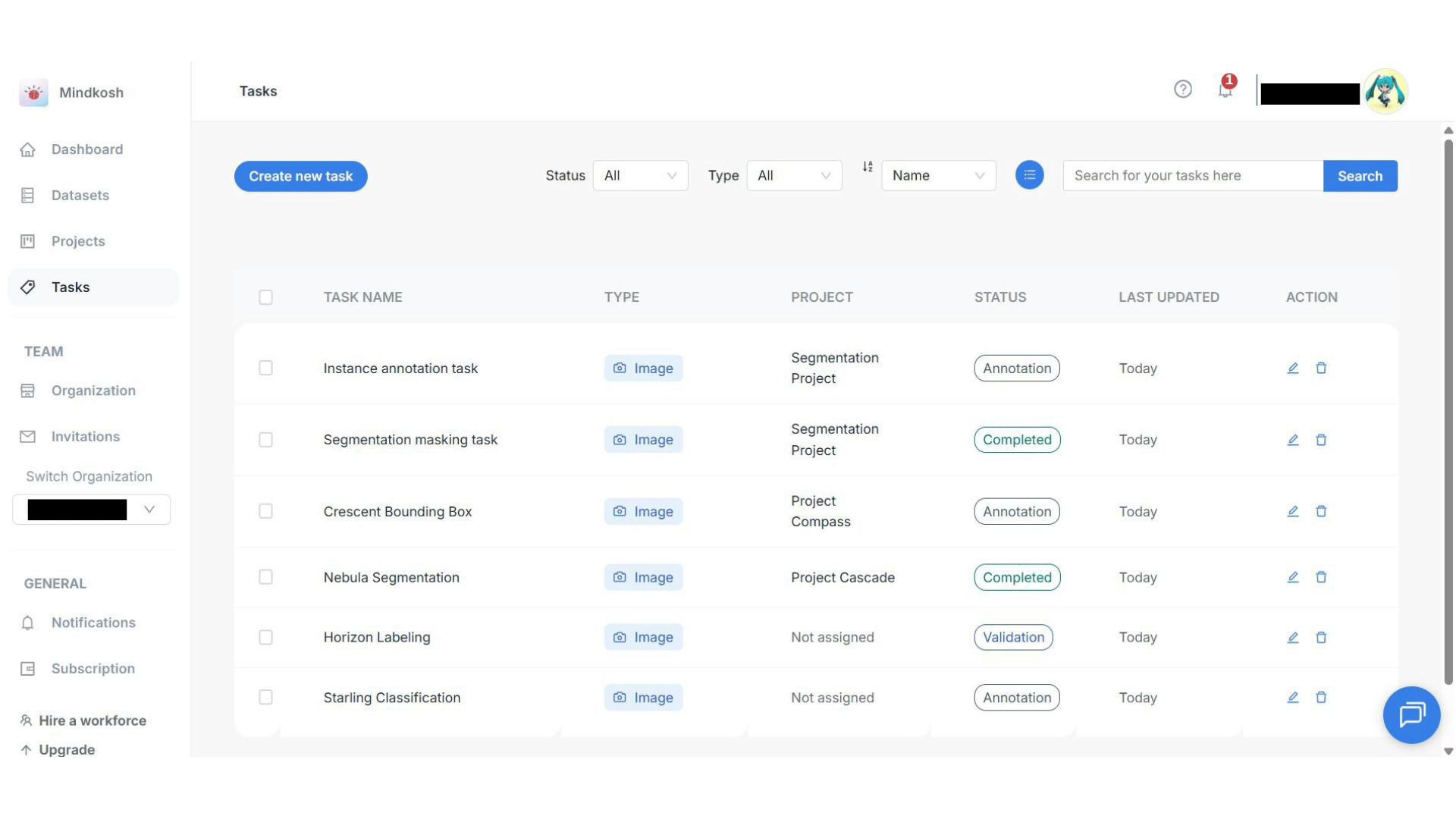

Platforms like Mindkosh are built to handle this level of complexity—supporting precise keypoint labeling across multi-sensor and video data pipelines.

This picture fromEgo-Exo4D shows Egocentric data with hand keypoint annotation, enabling a detailed understanding of human actions in Physical AI systems.

Where Physical AI Is Creating Business Opportunity Today

Physical AI is not a 2030 story. Early deployment is already underway across several industries, and the commercial case is being made in real operations.

Manufacturing and industrial operations are the clearest proving ground. A Deloitte report published in early 2026 notes that over 500,000 industrial robots were deployed globally in 2024 alone, a figure projected to reach 700,000 annual installations by 2028. The shift from traditional automation to Physical AI is already visible in how these systems are procured and configured: buyers are increasingly looking for adaptability, not just throughput.
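As a rough reading of those two figures, taking 500,000 as the 2024 base and assuming steady compound growth over the four years to 2028, the projection implies annual installations growing by roughly 9% a year:

$$
\text{CAGR} = \left(\frac{700{,}000}{500{,}000}\right)^{1/4} - 1 = 1.4^{1/4} - 1 \approx 0.088
$$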

Logistics and warehousing are also seeing rapid traction. Amazon's own data from its next-generation fulfillment center in Shreveport, Louisiana, found that advanced robotics required 30% more employees in reliability, maintenance, and engineering roles, a finding that directly challenges the dominant narrative about automation and job loss.

Healthcare is an emerging and commercially significant frontier. Robotic surgical systems have been in operating theaters for years, but Physical AI is expanding the scope. Autonomous systems now handle medication delivery, sanitation, and patient mobility support in hospitals, freeing clinical staff for higher-value tasks. The da Vinci surgical platform—the market leader in robotic-assisted surgery—now has over 11,000 installed systems globally, with procedure volume growing 17% year-on-year as of full-year 2025, according to Intuitive Surgical's Q4 2025 earnings report.

Agriculture and infrastructure are earlier-stage but growing markets. Autonomous inspection drones and precision agriculture robots are already operating commercially in various markets today.

Autonomous vehicles remain one of the most significant long-term opportunities, and one of the most capital-intensive. Waymo, whose San Francisco robotaxi service operated in pilot mode for almost three years before its commercial launch, now operates 3,000 robotaxis across multiple US cities and completes 500,000 paid rides per week as of early 2026. It is the most mature real-world deployment of Physical AI at scale, and its trajectory illustrates the sector's central challenge: near-perfect performance is required before deployment becomes commercially and regulatorily viable, which places extraordinary demands on training data quality.

Mass production of humanoid robots is no longer a concept — the first dedicated factory is already in the pipeline. Source: Tweaktown

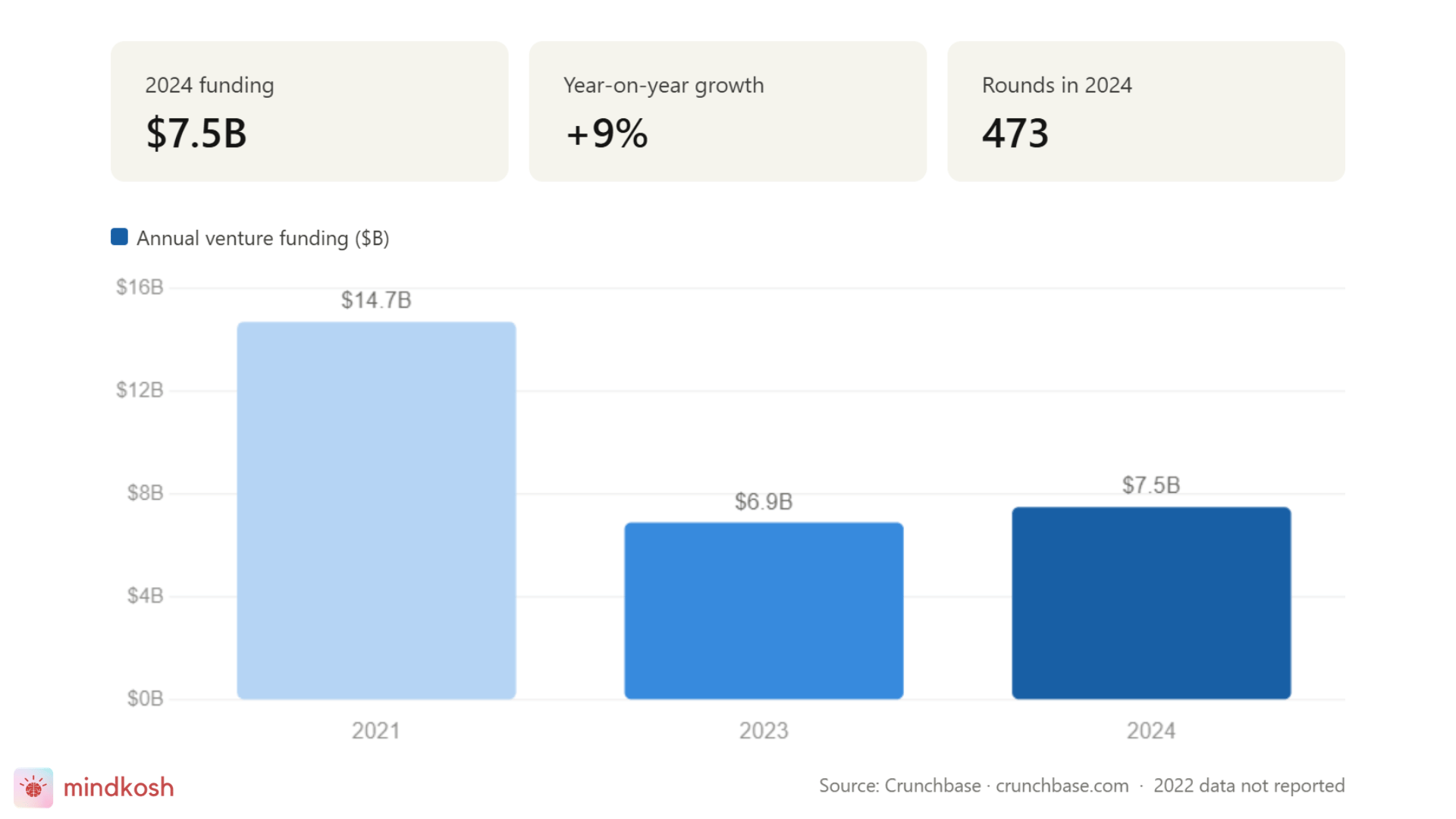

The market numbers you need to know

The scale of what is being built here is not incremental. Morgan Stanley projects the humanoid robot market could reach $5 trillion by 2050 — potentially twice the size of the global auto industry.

Today, just 5% of firms report that Physical AI is transforming their organization, but 41% expect it to be doing so within three years. That gap between current adoption and anticipated impact is a window, and for businesses in adjacent industries, it is a chance to establish a position before the market consolidates.

Venture funding tells the clearest story. After a historic peak of $14.7 billion in 2021 — driven by post-pandemic automation optimism — investment corrected sharply. But it did not disappear. By 2023, it had settled at $6.9 billion, and in 2024, it grew again to $7.5 billion, according to Crunchbase (shown in the diagram below). Crucially, the number of funding rounds fell from 671 to 473 over that same period — meaning capital is concentrating into fewer, larger bets. That is not a market losing confidence. That is a market maturing.

Jeff Bezos, Google, and others have made major bets on companies building the foundational intelligence layer of these systems. Tesla and several Chinese EV manufacturers are racing to commercialize humanoid robots. The capital is moving.

What Physical AI really requires: where the gaps are

Physical AI systems are only as effective as the data they are trained on. Unlike digital AI, where large volumes of text and images are readily available, Physical AI depends on high-quality, real-world, multi-sensor data—and this is where the true complexity lies.

These systems require precisely annotated datasets that combine inputs from cameras, LiDAR, radar, and other sensors to reflect how machines perceive the physical world. It’s not just about volume, but accuracy and context. A model guiding an autonomous vehicle, for instance, needs millions of labeled data points across lighting conditions, road types, and edge cases—each frame carefully annotated to ensure reliable decision-making.
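Combining camera and LiDAR data for annotation commonly involves projecting 3D LiDAR points into the camera image, so a labeler (or a model) can see both views of the same object. Below is a minimal pinhole-camera sketch in plain Python, assuming the LiDAR-to-camera rotation `R` and translation `t` and the intrinsics `fx, fy, cx, cy` are already known from calibration, and that the camera looks along its +z axis. The identity calibration in the example is made up for illustration; real sensor rigs have non-trivial extrinsics.

```python
from typing import List, Sequence, Tuple

Matrix3 = Sequence[Sequence[float]]
Point3 = Tuple[float, float, float]


def project_points(points: List[Point3], R: Matrix3, t: Sequence[float],
                   fx: float, fy: float, cx: float, cy: float,
                   width: int, height: int) -> List[Tuple[float, float]]:
    """Project LiDAR points into pixel coordinates with a pinhole camera model."""
    pixels = []
    for p in points:
        # Rigid transform from the LiDAR frame to the camera frame: p_cam = R p + t
        x, y, z = (sum(R[i][k] * p[k] for k in range(3)) + t[i] for i in range(3))
        if z <= 0:
            continue  # point is behind the camera
        u = fx * x / z + cx
        v = fy * y / z + cy
        if 0 <= u < width and 0 <= v < height:  # keep only points inside the image
            pixels.append((u, v))
    return pixels


identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]  # made-up calibration
cloud = [(0.0, 0.0, 10.0), (1.0, 0.0, 10.0), (0.0, 0.0, -5.0), (100.0, 0.0, 1.0)]
print(project_points(cloud, identity, (0.0, 0.0, 0.0),
                     100.0, 100.0, 320.0, 240.0, 640, 480))  # [(320.0, 240.0), (330.0, 240.0)]
```

Small calibration errors shift every projected point, which is one reason multi-sensor labels are only as accurate as the calibration behind them.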

This is not a one-time effort. Physical AI operates on a continuous learning cycle, where systems in deployment generate new data—especially from failures and edge cases—that must be captured, labeled, and fed back into training. The gap between a model that works in simulation and one that performs reliably in the real world is almost always a data problem.
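One way to operationalize this cycle is to triage deployment logs so that failures and uncertain moments reach annotators first. The sketch below assumes a hypothetical log record with a model confidence score and a human-intervention flag; the 0.6 threshold and the labeling budget are illustrative, and real pipelines may use richer triggers.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class DeploymentEvent:
    event_id: str
    confidence: float       # model's confidence in its decision (hypothetical field)
    human_intervened: bool  # an operator had to take over (hypothetical field)


def select_for_labeling(events: List[DeploymentEvent], min_confidence: float = 0.6,
                        budget: int = 100) -> List[DeploymentEvent]:
    """Pick the events most worth annotating: interventions first, then least confident."""
    flagged = [e for e in events if e.human_intervened or e.confidence < min_confidence]
    # False sorts before True, so interventions come first; ties broken by low confidence
    flagged.sort(key=lambda e: (not e.human_intervened, e.confidence))
    return flagged[:budget]


log = [
    DeploymentEvent("a", 0.90, False),
    DeploymentEvent("b", 0.30, False),
    DeploymentEvent("c", 0.80, True),
    DeploymentEvent("d", 0.50, False),
]
print([e.event_id for e in select_for_labeling(log, budget=2)])  # ['c', 'b']
```

The budget matters because annotation capacity is finite: ranking events lets the highest-value edge cases enter the next training round first.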

For businesses, this makes data infrastructure a core capability. Poor-quality labels lead to poor decisions in the field. High-quality, scalable annotation enables real-world performance.

This is where companies like Mindkosh create strategic value—helping teams structure and label complex, multi-sensor data so Physical AI systems can learn, adapt, and operate reliably at scale.

The Trust Question: Why Safety and Reliability Are Commercial Issues

No serious business conversation about Physical AI can avoid the question of trust.

When the output of a system is a physical action in a shared environment (a robot navigating a hospital corridor, an autonomous vehicle moving through traffic, a drone operating near people), the margin for error is far narrower than in conventional software: a mistake is a physical event, not a bad output on a screen.

This is not a soft concern. Left unaddressed, it can become a hard commercial barrier.

Trust is built through:

- Transparency in decision-making

- Reliable performance across edge cases

- Robust validation and testing

- Clear communication with users

Interestingly, trust is not just a technical challenge—it is also a communication challenge.

Businesses that can clearly articulate how their systems work and how they handle risk will have a significant advantage.

Waymo operated in testing mode on San Francisco streets for just under three years before commercializing. That was not regulatory friction; it was trust-building as a product strategy.

The trust cycle runs through data. Systems that are trained on comprehensive, accurately labeled data fail less frequently and recover more gracefully when they encounter edge cases. The investment in training data quality is, in practical terms, an investment in the safety and reliability of the deployed system. This reframes data annotation from a backend task into a risk management function that directly impacts whether a product can be deployed, insured, and scaled.

The emerging workforce conversation reinforces this point. As the World Economic Forum's analysis of Physical AI in manufacturing notes, the transition creates new skilled roles — robot technicians, AI trainers, autonomous systems coordinators — rather than simply eliminating existing ones. Companies that position themselves thoughtfully in the Physical AI ecosystem, whether as deployers, enablers, or data partners, are not just chasing a market opportunity; they are shaping what that market looks like.

How to think about positioning

Physical AI is not a single product or a single technology. It is an ecosystem — and like all ecosystems, it has layers. There are companies building the hardware (the robots themselves), companies building the AI models (the intelligence), companies providing the infrastructure (sensors, compute, cloud), and companies providing the data that makes the models work.

The biggest mistake companies make is assuming they need to build everything from scratch.

Instead, a smarter approach is to:

- Identify high-impact use cases within existing operations

- Evaluate where autonomy can reduce inefficiencies

- Start with pilot deployments

- Invest early in data infrastructure

The goal is not immediate transformation, but progressive capability building.

For CEOs in or adjacent to automation, the question is no longer whether Physical AI matters—the adoption curve is already underway. The real question is where your business creates value within this ecosystem, and whether you are positioned to capture it.

If you’re building automation systems—robots, autonomous vehicles, drones—your advantage will increasingly come down to model performance, which is ultimately driven by data quality. Your annotation pipeline is not a support function; it is a competitive asset.

If you operate in industries being reshaped by Physical AI—manufacturing, logistics, healthcare, construction—the opportunity lies in early adoption. Those who integrate these systems into workflows now will shape operations around them, rather than being forced to adapt later.

If you’re investing or building in this space, the biggest opportunities remain in the enabling infrastructure—data operations, simulation, validation, and edge systems—where the market is still fragmented and evolving.

Conclusion

Physical AI represents the evolution of artificial intelligence from passive analysis to active participation in the real world. By combining real-world sensory data, adaptive learning systems, and autonomous decision-making, it enables machines to operate beyond controlled environments and into dynamic, unpredictable settings.

For businesses, this is not just a technological advancement—it is a shift in how operations can be designed, optimized, and scaled. Those who invest early in understanding its capabilities, building the right data infrastructure, and identifying practical use cases will be best positioned to lead in this new era of automation.

As the definition of Physical AI continues to evolve, one thing is clear: the competitive advantage will belong to organizations that treat it not as a futuristic concept, but as a present-day capability to be explored, tested, and refined.

At Mindkosh, we work with automation companies building exactly this kind of capability. Our multi-sensor annotation platform is designed for the complexity that Physical AI demands—precise, scalable, and built for the data types that autonomous systems actually produce. If you are building in this space and want to discuss what your training data pipeline needs to look like, we would be glad to start that conversation.